What’s the best incentive to attract high-quality participants who show up to your study? When it comes to recruiting quality participants, incentives definitely matter. We looked at a sample of 25,000+ completed research sessions run on the User Interviews platform to help answer the question of what incentives to offer to generate the best outcomes for your research.

Since you’re working with finite time and research budgets, it’s important to get this right. You don’t want to spend money you don’t need to, nor do you want to be overly stingy and pay for it with your time and no shows.

We hope this report will help you choose the best incentive for your research recruiting, whether your studies are in-person or remote, target professional or consumer participants, or run shorter or longer sessions.

As with many things in research, the right answer depends. How niche is your audience? How much are they motivated by a cash incentive versus some other kind of thank you gift? Incentives are a nice lever you can experiment with as you recruit different audiences for your research. You can use this data to find helpful starting points in terms of averages and inflection points, where increasing incentives makes a big difference or actually results in diminishing returns. But know that increasing your incentive, incrementally if you have the time and inclination, can make a big difference in attracting participants to your study. We’ve also created a calculator to help you easily apply our learnings to future studies (you’re welcome 😉).

P.S. - Check out our User Research Incentive Calculator to calculate the best incentive to attract participants

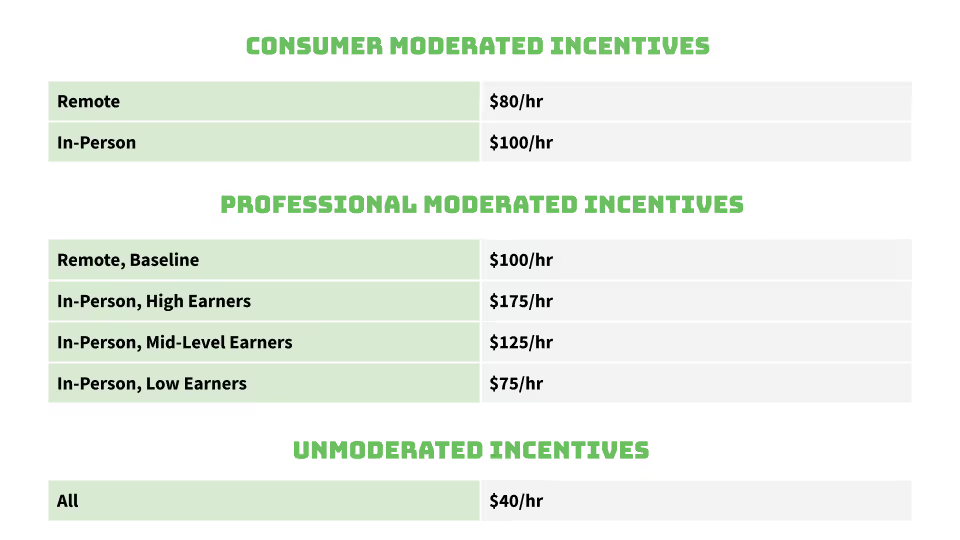

Since we’re not the type to bury the lede, here are the recommendations we’ve settled on based on the data we collected and the analysis we conducted.

First, we set recommendations for incentives based on what type of participants you need to talk to, where your sessions will take place, and whether your sessions are moderated or unmoderated.

Then, we looked at the effect the length of your session had on the incentive. We hypothesized that the same per-minute rate may not apply to shorter studies, and that there was a minimum incentive amount needed to get a participant in the door.

Study Design and Terms

In our analysis, we found that incentives had a big impact on how many requested sessions were ultimately matched and completed—up to a point. There’s a threshold you need to provide to get people in the door, but after that, we’ve established a per hour rate that makes it easy to find a good baseline incentive, which you can adjust to fit your needs.

Our recommendations are meant to be starting points for a larger conversation about what incentive you should offer to get the best results from your research. There are obviously factors that the data in this report won’t account for, like how difficult your exact participants are to find, how meaningful cash or cash-like incentives are to them, how much time you’re asking them to commit overall, the nature of your study, and so on.

For example, asking users to come to your lab and participate in road-testing a self-driving car is very different than asking them to come to your office for a generative interview. You may need to play around with a few different incentives, ask around for recommendations in UXR communities, or use your own past data to make the best conclusion for you and your team. The most successful researchers we see pay the best incentive they can reasonably afford.

The majority of this report will cover moderated sessions. That’s because most of our customers conduct moderated research through our platform. It is also worth noting this data set is limited to U.S. and Canada based participants and incentives, as is our participant panel.

We also dove into which incentive we recommend for unmoderated sessions. When setting an incentive for your unmoderated test, think about how long it may take the participant to complete the task. Try it out with a few of your coworkers, or complete the task yourself to get a better idea of how long it may take an actual participant.

Methodology

To gather data for our analysis, we started with a sample of 25,000+ sessions, conducted on the User Interviews platform within the last year. We divided the sessions up based on the incentive they offered per hour, in intervals of $10.

We then looked at how many projects were completed at that rate, how many sessions were completed, how many sessions were requested, and how many no shows there were. We also looked at whether sessions occurred remotely or in-person, whether they were moderated or unmoderated, and what kind of participants they requested.
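If you want to run the same kind of cut on your own past studies, here is a minimal sketch of the bucketing step. The field names (`incentive`, `minutes`, `status`) and the sample records are hypothetical, and we're assuming each $10 interval is labeled by its lower bound; the report doesn't spell out how boundary values were assigned.

```python
from collections import defaultdict


def hourly_rate(incentive, minutes):
    """Convert a flat session incentive into a per-hour rate."""
    return incentive * 60 / minutes


def bucket_for(rate, width=10):
    """Lower bound of the $10-wide interval containing `rate` (assumed floor-based)."""
    return int(rate // width) * width


def summarize(sessions, width=10):
    """Count completed sessions and no shows per incentive bucket."""
    totals = defaultdict(lambda: {"completed": 0, "no_shows": 0})
    for s in sessions:
        key = bucket_for(hourly_rate(s["incentive"], s["minutes"]), width)
        if s["status"] == "completed":
            totals[key]["completed"] += 1
        elif s["status"] == "no_show":
            totals[key]["no_shows"] += 1
    return dict(totals)


# Hypothetical records, for illustration only (not data from the report).
sample = [
    {"incentive": 40, "minutes": 30, "status": "completed"},   # $80/hour
    {"incentive": 65, "minutes": 60, "status": "no_show"},     # $65/hour -> $60 bucket
    {"incentive": 60, "minutes": 60, "status": "completed"},   # $60/hour
    {"incentive": 100, "minutes": 60, "status": "completed"},  # $100/hour
]

summary = summarize(sample)
for bucket in sorted(summary):
    print(f"${bucket}/hour: {summary[bucket]}")
```

Once sessions are grouped this way, each bucket can be compared on completed sessions and no shows.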

Terms

We use a variety of terms in this report, and since many of them can mean different things to different people, we’ll take a moment to define them now.

Incentive

An incentive is a reward or thank you that you offer participants in exchange for taking part in your research. It can be anything–from a cool hat to cold hard cash. Since cash and cash-like incentives (think Amazon gift cards) are the most commonly offered types of incentives, this report is focused on those. Plus, they’re easiest to quantify.

Fill Rate

This one’s a little tricky. Fill rate is a term we use here at User Interviews to talk about the measure of how many participants a researcher requested versus how many sessions were actually completed. For example, if a researcher requested to talk to 10 participants, but only completed sessions with 8, that’s an 80% fill rate. There are many factors that can affect the fill rate.

No Show Rate

This rate measures how many people were confirmed for a session but did not show up for it. In this report, we’ve calculated it as total no shows / (total no shows + total completed sessions).
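For anyone tracking these two rates in a spreadsheet or script, here is a small sketch of both calculations as defined above. The function names are our own for illustration; they are not part of any User Interviews tooling.

```python
def fill_rate(requested, completed):
    """Completed sessions as a share of requested sessions."""
    if requested <= 0:
        raise ValueError("requested must be positive")
    return completed / requested


def no_show_rate(no_shows, completed):
    """No shows as a share of confirmed sessions (no shows + completed)."""
    confirmed = no_shows + completed
    if confirmed <= 0:
        raise ValueError("need at least one confirmed session")
    return no_shows / confirmed


# The example from the fill rate definition: 10 requested, 8 completed.
print(f"Fill rate: {fill_rate(10, 8):.0%}")
# A made-up example: 1 no show alongside 9 completed sessions.
print(f"No show rate: {no_show_rate(1, 9):.0%}")
```

Note that the no show rate divides by confirmed sessions (no shows plus completed), not by requested sessions, so the two rates don't need to add up to 100%.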

Consumer

When we say consumer, we’re talking about participants requested on our platform that do not have job title targeting. Of course, consumer participants often have jobs, and may also participate as professionals in other studies.

For example, if you’re running a study on grocery shopping and you need to talk to people between the ages of 25-35 who are the primary shoppers for their household, you’d be targeting consumers.

You’d also be targeting consumers if you want to talk to people who have recently purchased a specific kind of lawnmower, have kids between the ages of 6-8, or live in towns of less than 30,000 people. If they need to be landscape architects, we’re talking about professionals.

Professional

In this report, professional participants are people you need to target based on their job titles and skills. They are more difficult to match, and expect more compensation for their time.

For example, if you need to talk to Cardiologists in Chicago, you’re targeting professionals.

The same goes for targeting VPs of Marketing, Uber drivers, or Developers. These are all professionals, not to be confused with “professional participants” who try to participate in studies as a primary source of income.

Remote

A remote study is any study that doesn’t require the participant and the researcher to be in the same physical location. This can mean a video chat, a phone call, or a recorded submission.

In-Person

An in-person study requires the participant and the researcher to be in the same physical location. Think having the participant come to your office for an interview, hosting an in-person focus group, or conducting ethnography research. In-person research can also include things like field studies, on-the-street research, or in-store shop-alongs.

Moderated Sessions

Moderated sessions are sessions that have a moderator present. This means a researcher, designer, PM, or anyone else who guides the participant through the task or questions at hand. Generative interviews, moderated usability tests, and field studies are all moderated research activities.

Unmoderated Sessions

Unmoderated sessions don’t have a moderator present. This means the participant completes the activity on their own, without the help of a researcher or moderator. Unmoderated usability tests, tree tests, diary studies, and first click tests are all examples of unmoderated tasks.

Key Research Incentive Findings

Below we evaluate remote, in-person, moderated, unmoderated, consumer, and professional study incentives across market dominance, fill rate, and no-show rate dimensions. Get your popcorn. Let’s dive in!

Moderated Consumer Research Incentives

Most Common Incentives

We looked at the percentage of completed moderated sessions through the User Interviews platform to see what most people are offering. In the chart below, you can see that the percentage of people offering less than $40/hour is fairly low, accounting for only 7% of total completed sessions.

The majority of people, 74%, offer between $60 and $100 per hour. The most common incentive, offered by 25%, was $100/hour. This graph also shows two other spikes, at $60/hour, accounting for 24% of completed sessions, and at $80/hour, accounting for 17% of completed sessions.

What does this mean for you? Well, if you want to be within the market range of what people typically offer for successfully completed moderated consumer studies, aim between $60 and $100/hour. If you’d like to be right on par with the market, $100/hour is the most common incentive among our sample set.

Best Incentives for a High Fill Rate

We also measured incentive success based on fill rate, to see which incentive would help you fill your study most reliably. The highest fill rate was shown at $90/hour, at 86%. However, $90/hour also had a low percentage of completed sessions, accounting for only 1.65% of completed sessions.

We’ll look at our three most commonly paid incentives, $60, $80, and $100/hour. When studies pay incentives of $60/hour, the fill rate is 77%. At $80/hour, the fill rate is 75%. At $100/hour, the fill rate is highest, at 80%. All in the same ballpark.

To get to a slightly more definitive recommendation, let’s slice the data one additional way—incentives compared to no show rates.

Best Incentives for a Low No Show Rate

Unsurprisingly, the highest no show rates appear when the lowest incentives are offered, and rates generally decline as incentives increase. When looking at $60, $80, and $100/hour, we can see that the lowest no show rate, 7%, appears at $100/hour. For studies that offered $60/hour, the no show rate was 10%, and at $80/hour, the no show rate was 8%.

Our Recommendation for Moderated Consumer Research

When we combine the three cuts of the data—fill rate, no show rate, market popularity—$100/hour stands out as the best option, ranking highest across all criteria. That said, anything in the range of $60-$100 is a solid baseline, all things being equal. Keep in mind, this section focused on all consumer sessions, not whether they were in-person or remote. Incentives are best when they’re tailored to the task you’re requesting your participants to complete, and where your study takes place is a part of that. Let’s dig into those details next.

Moderated Consumer Remote Incentives

Typically, researchers can offer lower incentives for remote research sessions, since they don’t require a participant to leave work, drive to a testing location, or spend money on subway fare. So if you’re on the fence about remote versus in-person sessions, pay close attention to the differences in average incentive offered.

Most Common Incentives

The majority of completed moderated consumer remote sessions, 75%, offered incentives between $60 and $100 per hour. The plurality of completed sessions, 33%, were completed with an incentive of $60/hour. Coming in second, with 23% of completed sessions, was an incentive of $80/hour. The third contender, $100/hour, accounted for 12% of completed sessions. We’ll start with that range and continue our pattern of looking at incentives across market standard, fill rate, and no show rate.

Best Incentives for a High Fill Rate

In the chart above, you can see our sample has a pretty close set of fill rates for moderated consumer remote studies. We’ll zoom in on the three most commonly offered rates, $60, $80, and $100/hour.

When the incentive was $60/hour, we saw an average fill rate of 81%. When it was higher, at $80/hour, the average fill rate was also higher, at 85%. When the incentive was highest, $100/hour, the average fill rate was 79%, though only 12% of sessions are represented at this rate, so it’s a smaller sample size. To determine which we recommend, we’ll dig into the no show rate as well.

Best Incentives for a Low No Show Rate

We saw the average no show rate decline as the incentives got higher. For studies offering $60/hour, the average no show rate was 10%. When the incentive was $80/hour, the average no show rate was 8%. When it was $100/hour, the no show rate was 6%.

Our Recommendation for Moderated Consumer Remote Research

Given that $80/hour provides the best combination of completed sessions, fill rate, and no show rate, our recommendation for moderated consumer remote sessions is $80/hour.

Moderated Consumer In-Person Incentives

Most Common Incentives

In-person studies typically offer a bit of a higher incentive since they require more effort on the part of the participant. The most popular incentive for completed moderated consumer in-person sessions is $100/hour, representing 42% of all sessions. The majority of sessions, 83%, were completed at incentive rates between $60 and $120/hour. $100/hour is clearly dominant in terms of what the market is offering, so let’s see what happens when we look at fill rates and no show rates.

Best Incentives for a High Fill Rate

We saw the highest fill rate, 88%, when an incentive of $90/hour was offered. But, $90/hour incentives only accounted for 2% of all completed sessions. The dominant incentive offered, $100/hour, had the second-highest fill rate, at 80%. So far, $100/hour seems like a winner, but to be confident we’re making the right recommendation, we’ll need to look at the no show rate as well.

Best Incentives for a Low No Show Rate

Unsurprisingly, we can again see that lower incentives line up with higher no show rates. The lowest no show rate, 4%, can be seen when researchers offer $80/hour. But, without a high fill rate and representing less than 10 percent of completed sessions, we can’t declare it our recommendation for this type of study. When we look at $100/hour, which dominated in completed sessions and had the second-best fill rate, it had a no show rate of 6%.

Our Recommendation for Moderated Consumer In-Person Research

Our recommendation is $100/hour. It dominates in terms of percentage of completed studies, has a great fill rate, and a good no show rate.

Moderated Professional Research Incentives

When it comes to offering research incentives for professionals, you may need to shell out a higher incentive to get the right people in the door. When we talk about professional participants, we mean we’re looking for people with a specific job title. This doesn’t mean our consumer population doesn’t have wonderful professionals in it; it’s about whether or not your research relies on their professional skills. For example, if you need to talk to Forklift Drivers, you’d need to find professional participants. The same goes for CEOs, Nurse Practitioners, Supply Chain Managers, Content Writers, etc.

Because being a professional can mean so many different things, it’s important to consider the day-to-day of the professionals you’re recruiting. What kind of incentive would mean something to them? Do they have a lunch break to talk to you? Do you need to see them in action? Again, the recommendations listed here are jumping-off points for a larger conversation about what kind of incentive would mean enough to your participants that they would drop what they’re doing to talk to you.

Our Project Coordinators, who have been helping researchers on User Interviews launch successful projects for a combined 9+ years, have put together a really helpful incentive guide that outlines different incentive tiers for professional participants. We’d recommend referring back to it, in combination with the recommendations we make here, to provide the best, most reliably successful incentives for your professional studies.

Most Common Incentives

The majority of moderated professional studies, 80%, offered incentives ranging from $60 to $150 per hour. The most common incentive for completed moderated professional sessions, representing 29% of sessions, offered an incentive of $100/hour. The second most common incentive offered, with 13% of total sessions, was $60/hour. Coming in third was $150/hour, with 11% of total completed sessions.

Best Incentives for a High Fill Rate

We’ll look at our three most offered incentive rates, $60, $100, and $150/hour and their fill rates.

Studies offering $150/hour showed an average fill rate of 73%. Those offering incentives of $100/hour had an average fill rate of 70%. Lastly, studies that offered $60/hour showed an average fill rate of 75%.

Best Incentives for a Low No Show Rate

To determine which incentive rate we recommend, we’ll also look at no show rates. Not much separates our three contenders in average no show rate. Our first contender, $60/hour, shows a no show rate of 5%. Our second contender, $100/hour, shows a no show rate that’s slightly higher, at 6%. The third contender, $150/hour, has an average no show rate of 5%.

Our Recommendation for Moderated Professional Research

Since $100/hour dominates in terms of completed sessions, and has competitive fill and no show rates as well, it’s our recommendation for professional moderated studies in general.

Here we’re talking about all professional sessions, but you will likely see the best results by breaking it down further. The incentive you offer your professional participants should be valuable to them. It should be something they read and say, “I can make what for 30 minutes of chatting with someone?”

Moderated Professional Remote Incentives

Professional remote sessions can be easier for both the researcher and the participant since they don’t have to leave their busy lives to participate in research, and that can mean a lower incentive is warranted too.

Most Common Incentives

The majority of completed moderated remote professional sessions, 80%, earned between $60 and $150/hour. When we looked at the percentage of total research sessions completed, $100/hour was the most common at 28%. The second most common, accounting for 14%, was $60/hour. The third most common was $150/hour, accounting for 11% of total completed sessions. Coming in fourth, accounting for 8% of all professional remote sessions, was $200/hour.

The fact that our four most popular professional remote incentives line up with our overall professional incentives makes sense, since 90% of all moderated professional sessions in our sample were remote, compared to 10% of sessions that were in-person.

Best Incentives for a High Fill Rate

As with our other recommendations, we’ll use the fill and no show rates to determine our recommendations for moderated, remote, professional studies. Our four contenders, $60, $100, $150, and $200/hour, aren’t close in terms of how much you’re paying in incentives for a study of even 5 participants, so it’s important to take in all of the factors at play.
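To make that spread concrete, here is a quick sketch of the incentive budget for a 5-participant study at each of the popular hourly rates in this section. It assumes one-hour sessions and counts incentives only (no recruiting fees or other costs); the function name is ours, for illustration.

```python
def incentive_budget(hourly_rate, participants, hours=1.0):
    """Total incentive spend for a study, ignoring any other costs."""
    return hourly_rate * hours * participants


# The popular professional remote rates discussed in this section.
for rate in (60, 100, 150, 200):
    total = incentive_budget(rate, participants=5)
    print(f"${rate}/hour x 5 participants: ${total:,.0f}")
```

Pass a different `hours` value to price shorter or longer sessions at the same hourly rate.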

For studies offering $60/hour, the average fill rate was 76%. Studies that offered $100/hour saw a slightly lower average fill rate, 73%. The average fill rate for studies that offered the second highest incentive, $150/hour, was 75%. The average fill rate for studies offering the highest incentive, $200/hour, was 71%.

Since those numbers are quite close, let’s look at the no show rate to determine our final recommendation.

Best Incentives for a Low No Show Rate

The no show rates for our four contenders are also in the same ballpark. For studies offering an incentive of $60/hour, there was an average no show rate of 6%. The no show rate for studies offering an incentive of $100/hour was 9%. For studies offering the second highest incentive, $150/hour, the average no show rate was 8%. For studies offering the highest incentive, $200/hour, the average no show rate was 5%.

Our Recommendation for Moderated Professional Remote Research

Based on our data, we recommend a baseline incentive of $100/hour for professional remote studies. It had, by far, the most completed sessions, along with fill and no show rates that were in line with the other incentives offered.

Because “professional” can mean different things to different people, we think there’s a larger story here. Our Project Coordinators actually have a set of recommendations based on their combined 9+ years of experience helping researchers launch successful studies on the User Interviews platform. Their experience-backed recommendations break out incentives for professionals by low, mid, and high wage earners. Incentives work best when tailored to the participants you’re offering them to, and there’s a big gap between what an Uber driver and a lawyer expect to make in an hour.

They recommend $70-90/hour for low-wage workers, which includes people like retail workers and gig-economy workers. For mid-wage workers, like managers, developers, small business owners, and so on, they recommend $100-150/hour. For high-wage workers, like doctors, dentists, and lawyers, they recommend a range from $150-225/hour.

For the purposes of our calculator, we took the most popular incentives and mapped them to wage tiers. That translates to $60/hour for low wage, $100 for mid-wage, and $175/hour (the mid-point between $150 and $200, which had similar popularity at the upper end) for high wage professionals.

Moderated Professional In-Person

90% of all moderated professional sessions in our sample were remote, compared to 10% of sessions that were in-person. This leaves us a little hesitant to make data-backed recommendations for in-person professional sessions, so we’ll defer to our Project Coordinators’ recommendations, based on a combined total of 9+ years of experience launching and managing projects on User Interviews. That means between $75-300/hour, depending on the income level of your professional participants. So, for low wage professionals, that’s between $75-100/hour. For mid-level earners, that means between $125-175/hour. For high earners, that means between $175-300/hour.

Most Common Incentives

Don’t worry, since this is, after all, a data report, we’ll still share what the in-person data shows. Based on our sample, the majority of sessions, 80%, offered between $80 and $200/hour. The plurality of in-person professional sessions, 36%, were completed at a rate of $100/hour. In second place was $150/hour, accounting for 14% of all completed sessions. Third went to $70/hour, which accounted for 13% of all completed sessions.

Our Recommendation for Moderated Professional In-Person Research

Since there is no clear pattern in this dataset, and it uses much less data, we’re most comfortable basing our recommendation on our Project Coordinators’ professional advice: between $75 and $300 an hour, depending on the income level of your professional participants.

We’ll use an average of the range they provide. For low wage professionals, that means around $85/hour. For mid-level professionals, that means $150/hour. For high earners, that means $235/hour. Our calculator also uses these recommendations.
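As a quick check on those numbers, here is a sketch that takes the midpoint of each in-person range. The exact midpoints are $87.50, $150, and $237.50; rounding down to the nearest $5 happens to reproduce the $85, $150, and $235 figures above, but that rounding rule is our assumption and isn't stated anywhere in the report.

```python
import math

# In-person professional ranges quoted from the Project Coordinators' guidance.
IN_PERSON_RANGES = {
    "low wage": (75, 100),
    "mid wage": (125, 175),
    "high wage": (175, 300),
}


def midpoint(low, high):
    """Simple average of the two ends of a range."""
    return (low + high) / 2


def round_down_to(value, step=5):
    """Round down to a multiple of `step` (assumed rule, not from the report)."""
    return math.floor(value / step) * step


for tier, (low, high) in IN_PERSON_RANGES.items():
    mid = midpoint(low, high)
    print(f"{tier}: midpoint ${mid:.2f}, rounded down to ${round_down_to(mid)}")
```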

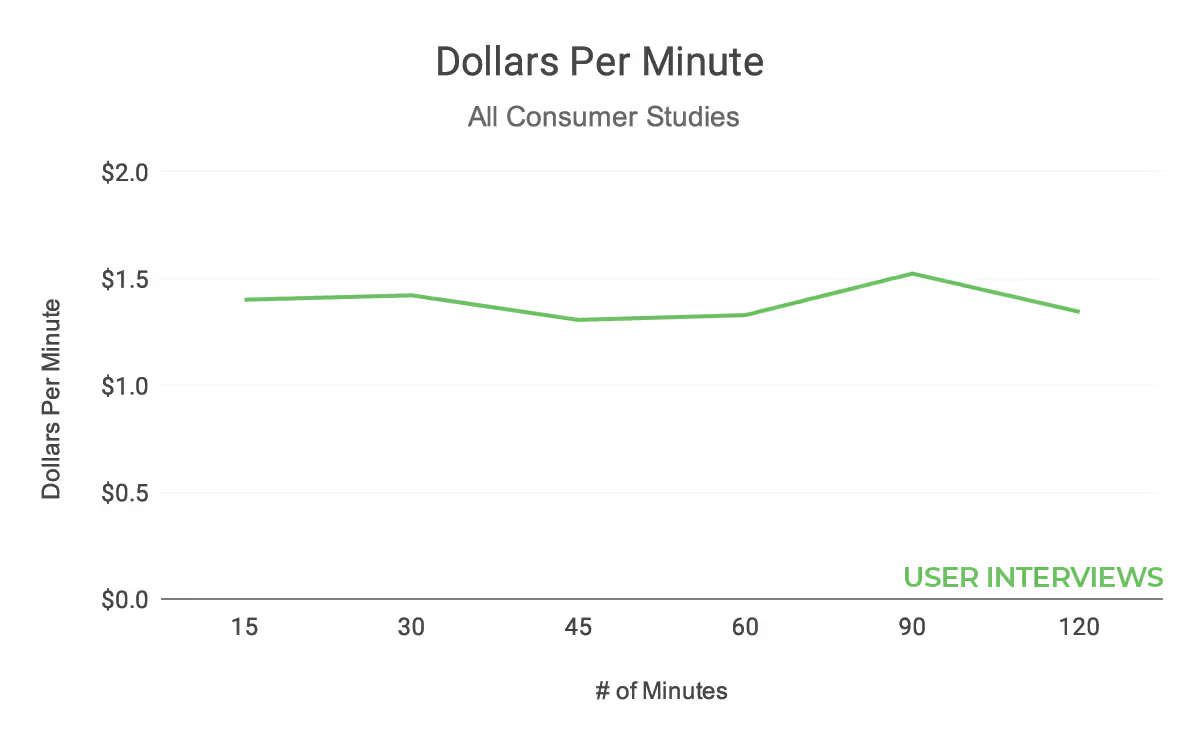

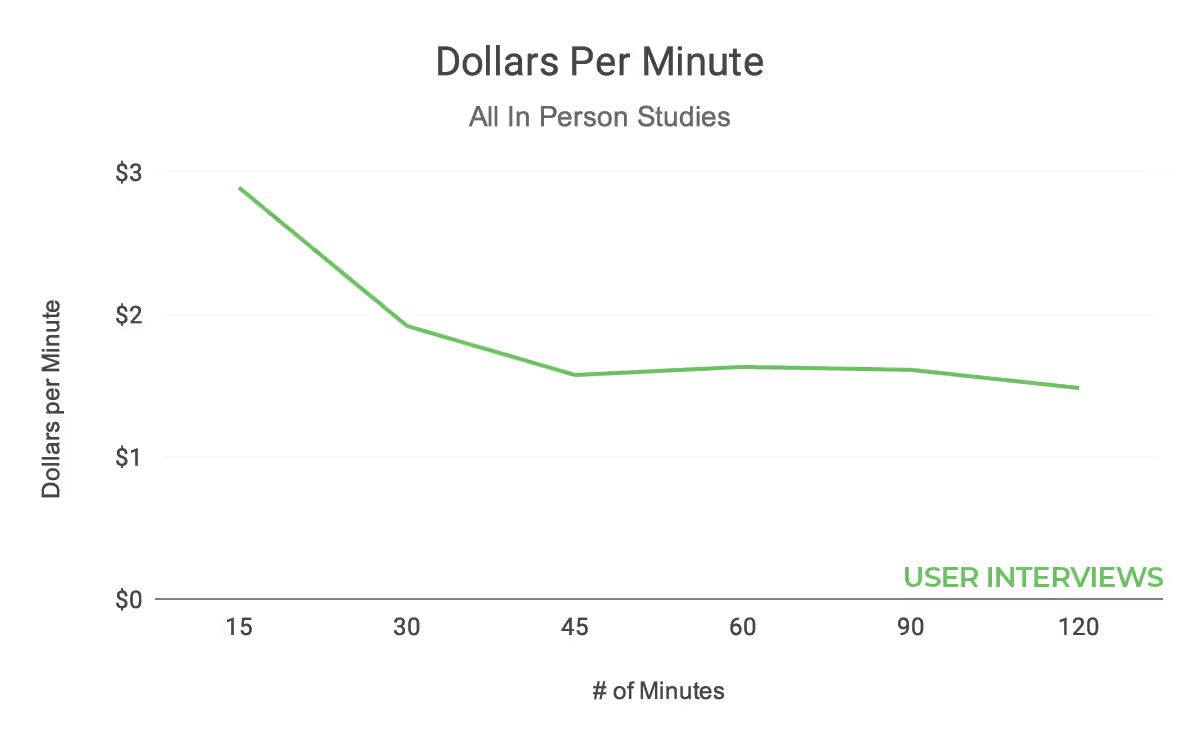

Short Studies Versus Long Studies

We also wanted to zoom out a little more, to see how the length of a study would impact the incentive you need to offer your participants. When studies are shorter, we hypothesized that our hourly rate approach would not be as applicable. As expected, researchers pay more per minute for shorter studies, which is especially pronounced for professionals and in-person studies. Think of it as a sort of base fee to get someone to show up for your study, and to commute to your study in the case of in-person, with a lower per-minute fee after that initial base fee.

The minimum amount typically offered for remote studies is lower, which makes sense considering there’s not as much extra effort involved in participating in a remote study. For an in-person study, a participant may have to drive to another location or prepare for the researcher to come to them. In a remote study, all a participant needs to do is open their laptop or turn on their phone, which makes it easier to participate from anywhere at any time.

For a remote study, the average incentive paid for a 15-minute session is $1.54/minute, or $23.10/session. Based on this, we’d recommend offering no less than $20 for a 15-minute remote study.

So, if you’re considering launching a short study, think twice about making it an in-person session. If you can bypass spending extra money on incentives and research space, without losing any of the integrity of your research, it’s a pretty clear win.

The average incentive for a 30-minute remote study is $1.66/minute, or $49.80/session. After 30 minutes, remote sessions evened out at $1.34/minute.

The average incentive paid for an in-person 15-minute session is $2.88/minute, or $43.20/session. This makes sense considering the participant will spend much more than 15 minutes preparing for and/or commuting to and from the session. Based on this, we’d recommend offering no less than $40 for a 15-minute in-person study.

30 minutes seems to be the threshold, after which the per-minute rate evens out for both in-person and remote studies. On average, the incentive paid for a 30-minute in-person session is $1.92/minute, or $57.60/session. After 30 minutes, in-person sessions evened out at $1.57/minute.

We also looked at the average dollars per minute for professional versus consumer studies. For consumers, the average incentive for a 15-minute session was $1.40/minute, or $21/session.

The consumers did not have the same steep threshold that professionals did. They showed a pretty even rate, averaging $1.39/minute, no matter how long the study was, with a minor uptick around 90 minutes.

Unsurprisingly, the professionals had a higher minimum incentive for participation. For 15-minute sessions, professionals earned $2.19/minute, or $32.85/session.

Professionals, however, had a threshold of 30 minutes, commanding $2.58/minute, or $77.40 on average for a 30-minute session. Their average for sessions between 45 and 90 minutes was $1.83/minute.
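The per-session figures in this section are just the per-minute average multiplied by the session length. Here is a short sketch that reproduces them from the rates quoted above; the table layout and function name are ours, for illustration.

```python
# (segment, session length in minutes, average $ per minute) as quoted in this section.
REPORTED_RATES = [
    ("remote", 15, 1.54),
    ("remote", 30, 1.66),
    ("in-person", 15, 2.88),
    ("in-person", 30, 1.92),
    ("consumer", 15, 1.40),
    ("professional", 15, 2.19),
    ("professional", 30, 2.58),
]


def session_incentive(per_minute, minutes):
    """Per-session incentive implied by a per-minute rate, rounded to cents."""
    return round(per_minute * minutes, 2)


for segment, minutes, per_minute in REPORTED_RATES:
    total = session_incentive(per_minute, minutes)
    print(f"{segment:>12} {minutes:>2} min at ${per_minute:.2f}/min = ${total:.2f}")
```

Swapping in the longer-session averages (for example, $1.34/minute for remote sessions past 30 minutes) gives a rough baseline for longer studies.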

Unmoderated Incentives

We’ve spent most of this report digging into moderated research incentives, but many research studies are unmoderated. Researchers often need to employ both qualitative and quantitative methods to find the answers they’re looking for. In our State of User Research Report, we found that researchers employed a variety of methods, many of which are unmoderated.

This graph shows the percentage of researchers among our survey respondents who conducted each type of research at least once a month. Of the types of research listed, things like surveys, unmoderated usability testing, card sorts, first click tests, diary studies, and tree tests can all be unmoderated.

The vast majority of our unmoderated sessions within this sample were targeted at consumers, rather than professionals. 85% of the unmoderated sessions in our sample were targeted at consumers, while only 15% were targeted at professionals. Since our set of unmoderated data was also considerably smaller than our set of moderated data—it only made up 12% of our total sampled sessions—we thought it would be best to look at our unmoderated testing data as a whole, instead of breaking it out into consumer and professional sets.

Most Common Incentives

Without further ado, let’s get to the data. We’ll start with the percentage of completed sessions for the most popular incentive amounts. Since there’s a huge range in what an unmoderated study can entail, we see a bigger spread of commonly offered incentives. The majority of incentives, 89%, paid between $40 and $100/hour.

Four incentives were paid most often. $40/hour is the most common incentive, at 29%. The second most commonly paid incentive, accounting for 20% of completed sessions, was double that, at $80/hour. Third and fourth places were very close together. Coming in third was $60/hour, accounting for 17% of completed sessions. Fourth was not far behind: $100/hour, accounting for 16% of sessions.

Best Incentives for a High Fill Rate

Since we saw four distinct spikes, which combined accounted for 81% of all completed sessions, we’ll zero in on those numbers for the fill and no show rate analysis. The incentive with the most completed sessions, $40/hour, had an average fill rate of 72%. Incentives of $80/hour had the highest average fill rate, at 81%. $60/hour showed an average fill rate of 75%, and $100/hour had an average fill rate of 70%.

Best Incentives for a Low No Show Rate

To settle which incentive we recommend for unmoderated research studies, we’ll have to look at the no show rates as well. For an unmoderated study, no show means something a bit different. It could mean a number of things, but most often it means that the participant was approved and said they completed the task but did not, or did not finish the task on time.

Our most common incentive rate, $40/hour, also had the lowest no show rate, at 7%. Studies that offered $60/hour averaged a no show rate of 15%, $80/hour averaged a no show rate of 10%, and $100/hour averaged a no show rate of 16%.

Our Recommendation for Unmoderated Research

Based on the combination of the percentage of completed sessions, fill rate, and no show rate, we recommend $40/hour for unmoderated studies. Again, this is meant to be a jumping-off point, and since unmoderated studies can really run the gamut in terms of what they require, talk over which incentive is best with your team. There’s a big difference in participant commitment between a 2-week diary study and a 5-minute first-click test, beyond the obvious time difference.

Bonus: Alternative Incentives

Is your audience less motivated by cash-like incentives? Try something else, like in-product bonuses, swag, or a charitable donation. This can be most useful for high-level occupational targeting and for your own customers. On a recent episode of our podcast, Product Discovery Coach Teresa Torres said,

I worked with a company, Snag a Job, they're a job board for hourly workers. They offered $20 for a 20-minute interview. So when you went to their home page, they popped up an interstitial. It just said, "Hey, do you have 20 minutes for us? We'll pay you $20." Now what's nice about that offer is 20 minutes is a small amount of time. And for an hourly worker who's likely making minimum wage, $20 for 20 minutes is a high value.

If you're Salesforce, and people work in your product all day every day, this type of recruiting will also work. But, $20 probably isn't going to cut it. In fact, I think for enterprise clients, cash is rarely the right reward, so you have to look at: What's something valuable that you can offer? And it could be anything from inviting them to an invite-only webinar. It could be giving them a discount on their subscription for a month. It could be giving them access to a premium helpline. You've got to just think about: What is outsized value for the ask that you're asking for.

The most important thing about your incentives is that they are valuable to your customers. Your incentive should be something that makes your customers excited about participating in your research, and that’s not always cash. Consider offering them specific in-product benefits, behind the scenes access, or awesome swag. There’s a whole community of people dedicated to buying, selling, and trading Mailchimp monkeys. Don’t underestimate the power of good swag.

Moral of the story? Get creative with your incentives. Although cash is the right move in many cases, it’s not the end-all-be-all of research incentives.

TL;DR

Just scrolled to the end to get the bottom line? I see you, I feel you. To sum it all up, we pulled a sample of 25,000+ sessions completed on the User Interviews platform to find baseline incentives for different kinds of studies.

If your budget is tight, think about whether or not your research can be done remotely. It allows you to spend less on incentives, office setups, and parking validation. Plus, running great remote research can be easier than you think.

You’ll also need to consider how long your study is when choosing your incentive since there’s a minimum you’ll need to offer to get participants through the door. We also found the average incentive offered for different study lengths to help you choose what incentive is best for you and your team.

The incentives in this report are the averages of the 25,000+ sessions included in our sample, and they’re meant more as baselines to get you started, not as hard and fast rules to live by. Though many participants want cash or cash-like (think Amazon gift cards) incentives, some may respond better to an alternative incentive. Be creative with what you offer—extra features in-product, skip-the-line support, or just really awesome swag—whatever it is, make sure it’s something your participant finds valuable.

One last closing thought: be fair with the incentive you offer to participants. They’re offering their time, their experience, and their thoughts, and that’s valuable stuff. If it wasn’t, why would we be doing research in the first place? Fairly rewarded participants = happy, honest, excited participants. Plus, offering the right incentive the first time means you’ll waste less time sitting around waiting for no shows and recruiting people to take their place.
