“How satisfied are you with the layout of this site?”

“How likely are you to recommend our product to every single one of your friends?”

“How many clicks did it take you to find what you were looking for? Be exact.”

I see questions like these daily while just trying to go about my normal internet business. And I hate them so much I’ve installed multiple ad-blockers to avoid them. But that doesn’t stop them from coming to my email, sliding past the ad-blockers, or presenting themselves in banner-like ads. Unfortunately, I’m not the only one being bombarded by surveys. It’s a universal problem.

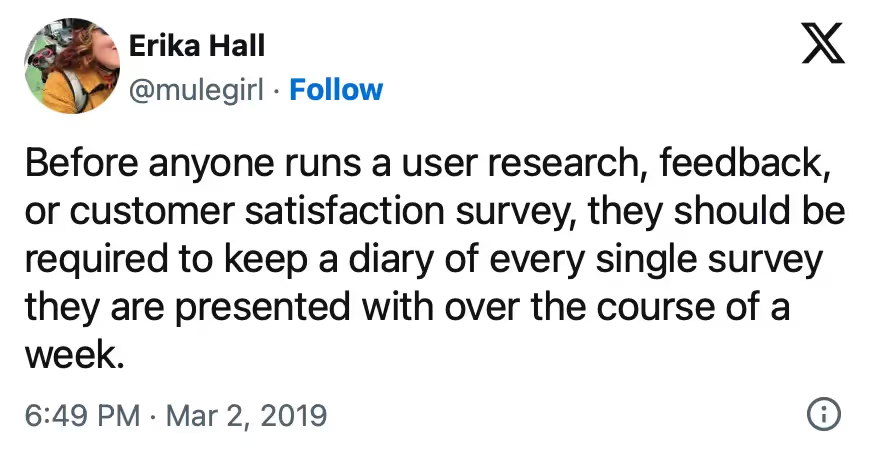

It’s no secret that Erika Hall, a user research and design powerhouse, is not a huge fan of how surveys have taken over our lives.

But it wasn’t always this way. The rise of free survey tools and the ease of distributing surveys have seemingly made everyone a survey-holic. Not only are users now so bombarded with surveys that they stop replying to any at all, but the surveys also don’t actually help teams answer their questions. We chatted with Erika about how surveys got so bad, and why they’re not actually helping most people build better experiences.


About our guest

Erika Hall is the co-founder of Mule Design and the author of Just Enough Research. She loves design, getting to the bottom of things, and well-designed research.

The problem with surveys

Erika isn’t against surveys. She’s against poorly done surveys, which is most of what bombards you online. In the podcast, she says, “surveys are the most dangerous and misused of all potential research tools or methods in the realm of doing things online...surveys are really overused, badly used, and people should mostly just stop.”

Since surveys are now so easy to conduct, all of the expertise that goes into creating a well-crafted survey and accurately interpreting the results seems to have gone out the window. Anyone and everyone is creating surveys to answer any question they may have about their product or service.

How we got here

A long, long time ago, in a land before high-speed internet was widely available, conducting a survey was difficult. You had to find someone who specialized in research to design and distribute your survey. Then they had to call people, send them mail, or stop them in the street to get your survey questions answered. After all that, the results had to be manually gathered, quantified, and analyzed.

Now, running a survey is as simple as creating one on Google Forms (or any other free survey tool) and distributing it via email, social media, or however else you connect with your audience. It’s easy to do for free or on the cheap, and you can get results almost immediately. They’re accessible. Why not use them?

How bad surveys are born

Not all surveys are bad surveys, but many are. And bad surveys are just a symptom of a larger problem with how many companies approach research. Erika outlines a few of the reasons people would rather run a poorly designed survey than invest in other kinds of research that may be better suited to answering the question at hand.

“Data” > answers

Surveys provide decision-makers and teams with statistics to base future decisions on. For example, “75% of users love pop-up surveys” sounds great and all, but how accurate is that statement? You can’t possibly have surveyed 100% of your users to get that number, so it’s really just 75% of whoever participated in the survey. If you surveyed them through a pop-up survey, your numbers are probably skewed in your favor too, since the people who dislike pop-ups most are the ones most likely to close it without answering. So basing a decision on that statistic probably isn’t the best idea.
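To put rough numbers on that gap, here is a minimal Python sketch. The figures (10,000 users, 400 respondents) are made up for illustration and do not come from the podcast, and `population_bounds` is a hypothetical helper, not part of any survey tool. It computes the worst-case range for the share of all users who "love pop-up surveys" when nothing is known about the people who didn't answer.

```python
def population_bounds(total_users, respondents, share_positive):
    """Worst-case range for the share of ALL users who would answer positively.

    The lower bound assumes every non-respondent would have said "no";
    the upper bound assumes every non-respondent would have said "yes".
    """
    if not 0 < respondents <= total_users:
        raise ValueError("respondents must be between 1 and total_users")
    if not 0.0 <= share_positive <= 1.0:
        raise ValueError("share_positive must be between 0 and 1")

    positive = share_positive * respondents
    non_respondents = total_users - respondents
    lower = positive / total_users
    upper = (positive + non_respondents) / total_users
    return lower, upper


# Hypothetical numbers: 10,000 users, 400 answered, 75% of those said "love it".
low, high = population_bounds(total_users=10_000, respondents=400, share_positive=0.75)
print(f"Share of all users who love pop-up surveys: {low:.1%} to {high:.1%}")
```

With these made-up inputs, the headline "75%" is compatible with anything from 3% to 99% of the full user base. The real figure depends entirely on who chose not to respond, which is exactly what the survey can't tell you.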

Method > reason

This is a problem pervasive throughout research, though it is especially relevant in the case of surveys. Instead of starting by asking, “What do we want to learn?” teams will begin their research by saying, “We’re going to run a survey, what should we ask?”

Good research starts with a good research question, one that is specific, actionable, and practical. This means you’re taking cues from what you want to learn, not from whichever research method you chose to use. In the example above, you could probably have learned more about users’ feelings around pop-up surveys by conducting a usability test, by simply looking at your analytics (say, the bounce rate went up and 75% of visitors close the survey immediately), or by a combination of the two.

Ease > effort

Again and again, people choose to conduct surveys because they are “easier” to execute. In reality, Erika says, creating a good survey is a lot like application design. It requires a lot of thought, expertise, and even some testing before you release it, if you want to do it well. You need to know what questions you need to answer and how to ask those questions. Since you can’t just ask people what you want to know, this is an art that takes time to master.

It’s easy to ignore the effort that goes into really good surveys, since it’s so easy to create and distribute them. Surveys also come with the added benefit of failing silently. Since you don’t always have a lot of context about the people who take your surveys, you won’t know how accurate the answers you received were.

On a higher level, surveys just aren’t perceived to be as risky as other methods of research. The results come back in cold, hard numbers that everyone can see. This makes them a great prop for backing up professional decisions. Plus, they’re less emotionally taxing, as you never have to actually interact with other humans. As Erika said on the pod, humans are just terrified of other humans. Surveys feel safe.

How we can do better

It’s not all doom and gloom though. Surveys can still be useful research tools when they’re executed properly. Short, in-context surveys targeted at specific groups of people can be really helpful. Surveys created by people who are literally professionals at creating surveys can provide amazing insights. When asked if she had any “best practices” tips for survey beginners, Erika said they should just not do them. Very often, you’d be better off answering your question with, well, a user interview :)

The oversaturation of surveys often comes from a good place, a craving to learn more about users, but most of those surveys miss the mark on efficacy.

💫 Continuous user feedback surveys can help inform future UX research studies, improve product designs, and speed up iteration cycles. Explore best practices, tools, and examples for effective surveys in the UX Research Field Guide.

![Erika Hall on Why Surveys [Almost Always] Suck](https://cdn.prod.website-files.com/59b1667dd2e65000019d07be/657b419222e863b26aef6f64_AS-Blog-Header-light.jpg)
