Want to recruit high-quality participants? Offer them high-quality incentives.

To be clear, ‘high-quality’ doesn’t always equate to ‘the most expensive’—but when it comes to recruiting the best participants for your study, incentives definitely matter.

In fact, research incentives matter so much that we’ve written an entire chapter about them in the UX Research Field Guide. (If you’re new to the concept and process of distributing incentives, we highly recommend giving that chapter a read.)

But whether you’re new to research or an ol’ pro, it’s easy to get tripped up by the question: “How much should I offer participants for this study?”

🧮 Check out the User Research Incentive Calculator, which is based on data from nearly 20,000 completed research projects recruited and filled via User Interviews.

In the following report, we take a deep dive into the data behind that calculator, to help understand which incentives are most likely to motivate the right people for different types of studies.

The data in this report will help you choose the best incentive for your research recruiting needs, whether they are:

- In-person or remote studies

- Moderated or unmoderated

- With professional or consumer participants

- 1:1 interviews, focus groups, or surveys

- Shorter or longer sessions

Note: This is our second report on research incentives—the first, The Ultimate Guide to User Research Incentives, was published in 2019, along with V.1 of our User Research Incentives Calculator.

📌 Think you can skip out on incentives? Think again. Here's why you have to pay your participants for UX research (and how to do so even on a shoestring budget).

Our recommendations at a glance

First, we set recommendations for incentives based on what type of participants you need to talk to (consumer/B2C or professional/B2B), where your sessions will take place (remotely or in person), whether your sessions are moderated or unmoderated, and—if moderated—what kind of study you’re recruiting for (interviews, focus groups, or multi-day/longitudinal studies).

Then, we looked at the effect that session length had on the incentive amount. We hypothesized (and did indeed find) that the same per-minute rate may not apply to shorter studies, and that a minimum incentive amount was key to getting a participant in the door.

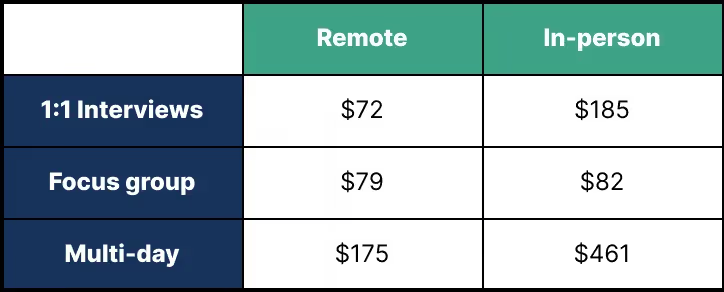

Optimal incentives for moderated studies

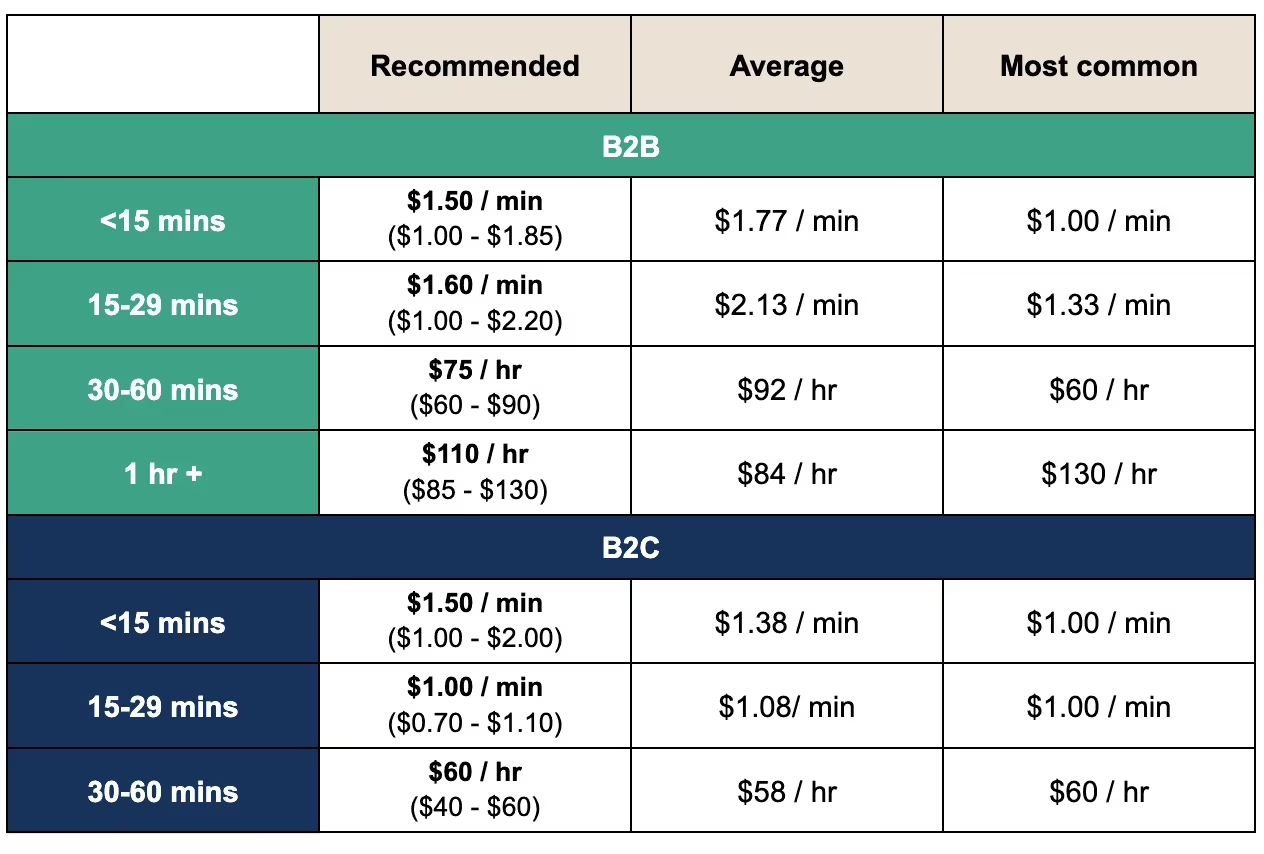

Optimal incentives for unmoderated studies

🌍 About the study

In our analysis, we found that incentives had a big impact on how many requested sessions were ultimately matched and completed—up to a point.

There’s a threshold you need to provide to get people in the door, but after that, we’ve established a per-hour rate that makes it easy to find a good baseline incentive, which you can adjust to fit your needs.

Our recommendations are meant to be starting points for a larger conversation about which incentives reap the best results. There are factors that the data in this report won’t account for, such as:

- How difficult your exact participants are to find

- How meaningful cash or cash-like incentives are to them

- How much time you’re asking them to commit overall

- The nature of your study

For example, asking users to come to your lab and participate in road-testing for a self-driving car is very different from asking them to join you on a Zoom call for a generative interview.

We’ve created this report and the Incentive Calculator to help make setting the right incentive amount easier. If you're still having trouble recruiting enough participants for studies, consider experimenting with different incentive options to find the one that resonates best with your audience.

🔬 Methodology

With help from our Analytics team, we analyzed a sample of nearly 20,000 completed projects recruited and filled with User Interviews within the last year.

Variables and segmentation

For each project, we looked at the following data points:

- Incentive amount (actual and rounded to the nearest multiple of 10)

- Number of participants requested by the researcher

- Number of qualified participants successfully matched to the project

- Number of no-show participants

We also applied the following filters to the data, which we used to analyze different segments:

- Audience: Consumer (B2C) or professional (B2B)

- Moderation: Moderated or unmoderated

- Remote status: Remote or in-person

- Study type: Interviews, focus groups, multi-day studies, unmoderated tasks

- Session length in minutes

For each segment (e.g. B2B, remote, moderated, interviews, <30 minutes) we calculated the following:

- Average incentive amount

- Most common incentive amount (rounded to nearest multiple of 10)

- Q:R rate (# of qualified participants found / # requested for study)

- No-show rate (the % of confirmed participants who never showed up)
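As a rough illustration of the four segment metrics listed above, here is a minimal Python sketch. The field names (`incentive`, `requested`, `qualified`, `confirmed`, `no_shows`) and the sample projects are hypothetical, and pooling counts across projects before dividing is an assumption; the report does not say whether rates were pooled or averaged per project.

```python
from collections import Counter
from statistics import mean


def round_to_multiple(amount, base=10):
    """Round a dollar amount to the nearest multiple of `base` (halves round up)."""
    return int((amount + base / 2) // base) * base


def segment_metrics(projects):
    """Summarize one segment from a list of per-project records (hypothetical schema)."""
    incentives = [p["incentive"] for p in projects]
    rounded = [round_to_multiple(x) for x in incentives]

    # Assumption: counts are pooled across all projects in the segment.
    requested = sum(p["requested"] for p in projects)
    qualified = sum(p["qualified"] for p in projects)
    confirmed = sum(p["confirmed"] for p in projects)
    no_shows = sum(p["no_shows"] for p in projects)

    return {
        "average_incentive": mean(incentives),
        "most_common_incentive": Counter(rounded).most_common(1)[0][0],
        "qr": qualified / requested,            # qualified found per participant requested
        "no_show_rate": no_shows / confirmed,   # share of confirmed participants who never showed
    }


# Made-up example data, not taken from the report's dataset.
projects = [
    {"incentive": 60, "requested": 10, "qualified": 40, "confirmed": 10, "no_shows": 1},
    {"incentive": 62, "requested": 5, "qualified": 20, "confirmed": 5, "no_shows": 0},
    {"incentive": 100, "requested": 5, "qualified": 30, "confirmed": 5, "no_shows": 1},
]
metrics = segment_metrics(projects)
print(metrics)
```

In this made-up segment, the $62 project is counted with the $60 one once rounded, which is why the most common incentive differs from the average.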

How we came up with our recommendations

We then synthesized this data to come up with our incentive recommendations for different study types. Since every study is unique, we have given an optimal range (e.g. $90 - $200/hr) for each segment, as well as a single value that represents the midway point of that range (e.g. $145).

To do this, we looked at the average, most common, and second-most common incentive rates for each segment. The smallest number of the three became the minimum “optimal” value, while the largest became the maximum “optimal” value in our range. (We rounded these numbers to the nearest multiple of 5.)

Then, we averaged those two values to find the midway point for anyone looking to be smack dab in the middle of the market.
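The range-and-midpoint steps described above can be sketched in a few lines of Python. The function names are ours, and the input values below are hypothetical, chosen only so that the result matches the $90–$200 range and $145 midpoint used as an example earlier.

```python
def round_to_nearest_5(amount):
    """Round a dollar amount to the nearest multiple of 5 (halves round up)."""
    return int((amount + 2.5) // 5) * 5


def optimal_range(average, most_common, second_most_common):
    """Return (minimum, maximum, midpoint) for a segment's optimal incentive range."""
    values = (average, most_common, second_most_common)
    low = round_to_nearest_5(min(values))    # smallest of the three values
    high = round_to_nearest_5(max(values))   # largest of the three values
    midpoint = (low + high) / 2              # average of the two ends of the range
    return low, high, midpoint


# Hypothetical inputs: average $123.40, most common $90, second-most common $200.
low, high, midpoint = optimal_range(123.4, 90, 200)
print(f"${low} - ${high}/hr, midpoint ${midpoint:.0f}")
```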

When the number of projects in a given segment was too small to rely on the average and most common numbers, we calculated our recommendations to the best of our ability.

For example, we did not have enough data on B2C in-person interviews <30 minutes, so we applied the % difference between B2C in-person and remote incentives more generally to the known values for B2C remote interviews <30 minutes to calculate our recommendations. (That said, we highly recommend conducting sessions <30 minutes remotely. There’s a reason we don’t have much data on short, in-person interviews in 2022!)
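To make that extrapolation concrete, here is a minimal sketch of applying a general percentage difference to a known value. The function name and all three dollar amounts are hypothetical placeholders, not figures from our dataset.

```python
def extrapolate_in_person(remote_short, remote_overall, in_person_overall):
    """Estimate a short in-person incentive from the general in-person vs. remote gap."""
    # Percentage difference between in-person and remote incentives in general.
    pct_difference = (in_person_overall - remote_overall) / remote_overall
    # Apply that same percentage uplift to the known short remote value.
    return remote_short * (1 + pct_difference)


# Hypothetical values: in-person pays 25% more than remote overall,
# and the known short remote incentive is $40.
estimate = extrapolate_in_person(remote_short=40, remote_overall=80, in_person_overall=100)
print(f"Estimated short in-person incentive: ${estimate:.2f}")
```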

📚 Definitions

To make sure we’re all on the same page, let’s take a moment to define the terms we’ll be using in this report. Namely:

- Incentive

- Ratio of qualified participants (Q:R)

- No-show rate

- Completed sessions

- Optimal range

Incentive

An incentive is a reward that you offer to participants in exchange for taking part in your research. Incentives can be anything, from a cool hat to cold hard cash.

They encourage people to apply to your study, help you recruit a wider (or, in some cases, more targeted) pool of participants, and thank participants for their time.

This report is focused on cash and cash-like incentives (like gift cards), since they are the most commonly offered types of incentives according to the State of User Research Report. (Plus, they’re easiest to quantify.)

Ratio of qualified participants (Q:R)

Ratio of qualified participants (Q:R) is a term we use here at User Interviews to talk about the number of qualified participants (people who fit the criteria of a particular study) who applied, relative to the number of participants requested by the researcher.

For example: If a researcher needed to recruit 10 participants and 20 qualified participants were found, the ratio of qualified participants would be 2:1. On the other hand, if a researcher requested to recruit 10 participants and only 5 qualified participants were found, the ratio of qualified participants would be 1:2.

The higher the ratio of qualified participants, the better.

No-show rate

The no-show rate is a measure of how many people were confirmed for a session, but did not show up for that session.

A low no-show rate is, of course, a good thing.

Completed sessions

Completed sessions are research sessions that were scheduled, held, and finished successfully. In other words, completed sessions are the building blocks for larger, closed research projects.

Optimal range

The optimal incentive range represents the minimum and maximum amounts among the following three values (rounded to the nearest multiple of 5): average, most common, and second most common incentive amount.

🧮 Variables

We will also be discussing the following variables (also, the inputs for the UX Research Incentives Calculator):

- B2C (consumer) vs B2B (professional)

- Remote vs. in-person

- Moderated vs. unmoderated

- Study type

- Session duration

B2C (consumer) vs B2B (professional)

Many people think of B2C and B2B as different types of companies, but at User Interviews, we use the two terms to refer to different types of participants.

When we say B2C or consumer, we’re talking about participants who were requested on our platform without any job title targeting. Of course, consumer participants often have jobs, and may also be recruited as professionals in other studies.

For example, consumer participants might include people who:

- Are between the ages of 25–35

- Are the primary shoppers for their households

- Have recently purchased a specific kind of lawn mower

- Have kids between the ages of 6–8

- Live in towns of less than 30,000 people

In the example above, many of the participants are likely employed, but the nature of their work is not relevant to the study at hand (or at least, is not part of the screening criteria).

Professional (B2B) participants, on the other hand, are people that have been recruited through User Interviews with targeting based on their job titles and skills. These folks are more difficult to match, and often expect more compensation for their time.

For example, professional participants might include people who are:

- Landscape architects

- Cardiologists

- VPs of Marketing

- Uber drivers

- Product developers

These are all professionals, not to be confused with “professional participants” who try to participate in studies as a primary source of income.

Remote vs. in-person

A remote study is any study that doesn’t require the participant and the researcher to be in the same physical location. The session can be held over video chat, a phone call, or a recorded submission.

An in-person study is a study which requires the participant and the researcher to be in the same physical location. For example, if you ask participants to come to your office for an interview, host an in-person focus group, or conduct ethnographic research in the field, those would all be considered in-person studies.

Moderated vs. unmoderated

Moderated sessions are those in which a researcher, designer, product manager, or other moderator observes—either in-person or remotely—as the participant takes part in the study.

Generative interviews, focus groups, moderated usability tests, and field studies are all examples of moderated research activities. Moderated studies often produce qualitative data.

Unmoderated sessions are sessions that don’t have a moderator present. The participant completes the activity on their own, without live observation by a researcher or moderator.

Surveys, unmoderated usability tests, tree tests, diary studies, and first click tests are all examples of unmoderated tasks. These studies often produce quantitative data.

Study type

In this report, we refer to four different types of research studies:

Interview

1:1 interviews are conversations with a single participant, in which a researcher asks questions about a topic of interest to gain a deeper understanding of the participant’s attitudes, beliefs, desires, and experiences.

Note: We’ve used the term “interviews” since those are by far the most common type of 1:1 moderated studies that we see; however, this bucket may also include moderated usability testing with an interview component, card sorts, etc.

Focus group

Focus groups are moderated conversations with a group of 5 to 10 participants in which a moderator asks the group a set of questions about a particular topic.

Note: As with “interviews”, in this context a “focus group” is an umbrella term for any moderated research session with multiple participants, and could include things like co-design workshops as well.

Unmoderated task

Unmoderated tasks are completed by participants (independently, and often at their own pace) without the real-time observation of a researcher or moderator.

There is no distinction in this report between remote and in-person unmoderated studies (the latter being rare and not adequately reflected in our dataset). Unmoderated tasks have been considered remote by default.

Multi-day

Multi-day studies are studies that span the course of days, weeks, or even months, such as diary studies or long-term field studies.

Session duration

Session duration refers to the length of time required for each research session.

In this report, we sorted duration times into 5 buckets, depending on study type and sample size:

- Less than 15 minutes

- 15–29 minutes

- 30–60 minutes

- 1 hour or more

- Multi-day

🤓 Our findings

Below we evaluate remote, in-person, moderated, unmoderated, consumer, and professional study incentives across market range, qualified-to-requested participants ratios, and no-show rate dimensions.

Get your popcorn. Let’s dive in!

Research incentives for short vs long studies

First, a word on session duration:

We wanted to see how the length of a study would impact the incentive you need to offer your participants. We hypothesized that when studies are shorter, our hourly rate approach would not be as applicable.

And indeed, researchers pay more per minute for shorter studies, especially for professionals and in-person studies.
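One simple way to picture why short sessions cost more per minute is a prorated hourly rate with a floor. This is only an illustrative model, not the formula behind our calculator, and the $80/hr rate and $40 minimum are hypothetical inputs.

```python
def baseline_incentive(minutes, hourly_rate, minimum):
    """Prorate an hourly rate by session length, but never go below the minimum."""
    prorated = hourly_rate * minutes / 60
    return max(minimum, prorated)


def effective_per_minute(minutes, hourly_rate, minimum):
    """Effective dollars per minute once the minimum is taken into account."""
    return baseline_incentive(minutes, hourly_rate, minimum) / minutes


# Hypothetical inputs: $80/hr rate with a $40 minimum.
for minutes in (15, 30, 60):
    total = baseline_incentive(minutes, hourly_rate=80, minimum=40)
    rate = effective_per_minute(minutes, hourly_rate=80, minimum=40)
    print(f"{minutes} min: ${total:.0f} total, ${rate:.2f}/min")
```

Under these assumed inputs, the 15-minute session pays the minimum, so its effective per-minute rate is higher than that of the 60-minute session.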

Minimum incentive amounts for short vs. long B2B studies

The minimum amount typically offered for remote studies is lower, which makes sense considering there’s not as much extra effort involved in participating in a remote study. For an in-person study, a participant may have to drive to another location or prepare for the researcher to come to them. In a remote study, all a participant needs to do is open their laptop or turn on their phone.

The average incentive paid for a remote session under 30 minutes is $70. After the first hour, remote studies paid $50–$60. Incentives for in-person studies are slightly higher (at $70 for B2C and $80 for B2B), which makes sense considering the participant will spend much more than 15 minutes preparing for and/or commuting to and from the session.

Minimum incentive amounts for short vs. long B2C studies

If you’re considering launching a short study, think twice about holding it in person. If you can bypass spending extra money on incentives and research space, without losing any of the integrity of your research, it’s a pretty clear win.

Moderated B2C research incentives

Average incentive amounts for moderated B2C studies

We looked at moderated projects recruited and filled through the User Interviews platform to see what the average incentives were for completed studies.

In Fig. 3, you can see the average incentive amount for moderated B2C studies, which varies by both study type and location (remote vs. in-person). Researchers offer a higher incentive for in-person studies, since these require a higher time commitment than remote studies do.

Average incentives for remote moderated B2C studies

Most common incentive amounts for moderated B2C studies

In Fig. 4, you can see the most common incentive amounts offered for moderated B2C studies. These fall at much lower levels than the average amounts—but just because they’re the most commonly offered doesn’t always make them the best incentive for recruiting and completing a study with the right participants.

(We’ll take a closer look at which incentives attracted the most qualified participants in the next section.)

Most common incentives for remote moderated B2C studies

Best incentives for a high ratio of qualified participants

We also measured incentive success based on the ratio of qualified participants who applied to the study to the number of participants requested by the researcher (Q:R), to see which incentive would help you recruit for your study most reliably.

For remote, B2C moderated studies (Fig. 5), the highest ratio was shown at $120/hour, at a 5.6 Q:R. This means that for every 1 participant that the researcher requested, 5.6 qualified participants applied for the study. When we look at our three most commonly paid incentives, $60, $80, and $100/hour, we can see that the ratio is slightly lower, ranging from roughly 4–4.5 Q:R.

Ratio of qualified participants by incentive (B2C moderated, remote)

Comparing remote Q:R to in-person Q:R (Fig. 5a), you can see that the Q:R for remote studies is generally higher across the board.

Although incentive amount does have an impact on Q:R, the higher Q:Rs here are likely due to the fact that remote studies generally require less of a time and effort commitment from participants, making them easier to fill regardless of incentive amount.

Ratio of qualified participants by incentive (B2C moderated, in-person)

In the chart above, you can see that the highest ratio of qualified to requested participants (Q:R) falls at 3.1, with an incentive of $20 for in-person studies.

Zooming in on the most commonly offered incentives—$70, $80, and $100—we can see that the Q:R hovers around 1–1.5 Q:R.

Again, note that the Q:R for remote studies is much higher than the Q:R for in-person studies, since remote studies are typically easier to participate in (and therefore, easier to recruit for).

Next, let’s look at the Q:R for multi-day, remote studies. For remote multi-day studies, the highest Q:R was 11.8 for an incentive of $220, but this was still a relatively small sample size with only 5 studies, so take this number with a grain of salt.

Ratio of qualified participants (B2C moderated, multi-day, remote)

Let’s take a look at the most commonly offered incentives for remote multi-day studies—$100, $150, and $250. For these, the Q:R was much lower, ranging between 4–5 Q:R. Still, that’s a healthy Q:R, and again likely reflects the relative ease of filling remote studies vs. in-person.

Ratio of qualified participants (B2C moderated, multi-day, in-person)

In Fig. 6a, you can see the Q:R for multi-day in-person studies. The highest Q:R here is 22.7 for a $200 incentive, but we’d consider that an outlier, since only 1 study reflects that ratio. Instead, we chose the next-highest Q:R, which has a larger sample size, as the more reliable basis for an incentive recommendation: a 2.7 Q:R for an incentive of $250.
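The "skip the small-sample outlier" reasoning used here can be sketched as a filter followed by a maximum. The cutoff of 5 studies, the sample sizes for the $250 and $300 rows, and the $300 row itself are hypothetical; only the 22.7 Q:R from 1 study at $200 and the 2.7 Q:R at $250 come from the text above.

```python
def most_reliable_incentive(rows, min_studies):
    """Pick the highest-Q:R row among those backed by at least `min_studies` studies."""
    eligible = [row for row in rows if row["studies"] >= min_studies]
    # Returns None when no row has a large enough sample.
    return max(eligible, key=lambda row: row["qr"], default=None)


rows = [
    {"incentive": 200, "qr": 22.7, "studies": 1},   # outlier: a single study
    {"incentive": 250, "qr": 2.7, "studies": 12},   # sample size is a made-up placeholder
    {"incentive": 300, "qr": 1.5, "studies": 8},    # entirely hypothetical row
]

best = most_reliable_incentive(rows, min_studies=5)  # the cutoff is an assumption
print(best)
```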

Best incentives for a low no-show rate (B2C moderated)

Studies with very low incentive amounts tend to have the highest no-show rates, and no-show rates generally decline as incentives increase.

In the charts below, we can see that the lowest no-show rate for remote studies (1%) appears at $160/hour, while the lowest no-show rate for in-person studies (0%) appears at a slightly higher incentive of $200/hour.

No-show rates by incentive amount (B2C, moderated, remote)

In Fig. 7a, we marked the no-show rate of 0% for a $170 incentive, but this number has a slightly smaller sample size, so we’d consider the $200 incentive to be a stronger recommendation.

No-show rates by incentive amount (B2C, moderated, in-person)

We also see the no-show rate decline as the incentives get higher. For studies offering $60/hour, the average no-show rate was 10%. When the incentive was $100/hour, the average no-show rate was roughly 8%. When it was $150/hour, the no-show rate was less than 5%.

These no-show rates are still quite high—just another clue that filling in-person studies can be tricky!

In Fig. 8, you can see the no-show rates for multi-day remote studies. The lowest no-show rate in this figure is 2.2%, for an incentive amount of $400. We chose this data point as being more significant due to the higher sample size, although there are lower no-show rates (0%) for other incentive amounts with smaller sample sizes.

No-show rates by incentive amount (multi-day, moderated, remote)

Recommended incentives for moderated consumer research

We’ve looked at the average incentives and most common incentive amounts for moderated B2C research, as well as those that lead to the highest ratio of qualified participants and the lowest no-shows.

Incentives for moderated B2C studies

When we combine the three cuts of the data—market range, averages, most common—you can see how incentives rise or fall based on the level of time and effort required by the participant.

Based on the numbers we looked at, here are our incentive recommendations for moderated B2C studies by study type:

- 1:1 interviews: $80/hr (remote) or $90/hr (in-person)

- Focus groups: $50/hr (remote) or $85/hr (in-person)

- Multi-day: $240/study (remote) or $355/study (in-person)

Moderated B2B research incentives

If you’re recruiting by job title or industry, you may need to shell out a higher incentive to get the right people in the door.

When we talk about professional (or B2B) participants in this report, we’re referring to participants who are being recruited specifically for their professional skills. For example, if you need to talk to Forklift Drivers, you’d need to find professional participants. The same goes for CEOs, Nurse Practitioners, Supply Chain Managers, Content Writers, etc.

Because being a professional can mean so many different things, it’s important to consider the day-to-day of the professionals you’re recruiting. What kind of incentive would mean something to them? Do they have a lunch break to talk to you? Do you need to see them in action?

Again, the recommendations listed here are meant to be jumping-off points for a larger conversation about what kind of incentive would mean enough to your participants that they would drop what they’re doing to talk to you.

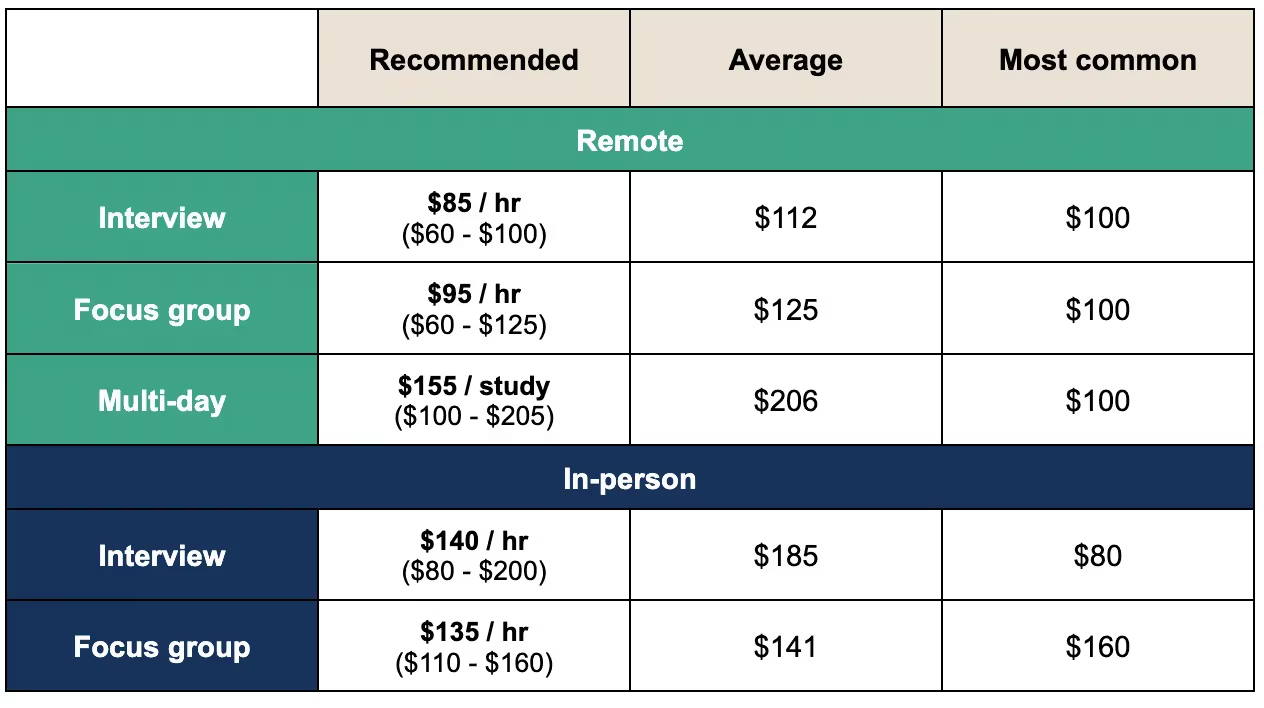

Average incentive amounts for moderated B2B studies

In Fig. 10, you can see the average incentives for moderated B2B studies. For remote studies, the average falls at $100 across study types. For in-person studies, the average falls at $80 for 1:1 interviews, $160 for focus groups, and $200 for multi-day studies.

Average incentives for moderated B2B studies

Most common incentive amounts for moderated B2B studies

The most common incentives offered for moderated B2B studies tend to skew higher than the average, at $112 for 1:1 remote interviews, $126 for remote focus groups, and $206 for remote multi-day studies.

For in-person studies, the most common incentives are $185 for 1:1 interviews, $141 for focus groups, and $200 for multi-day studies.

Most common incentives for moderated B2B studies

Best incentives for a high ratio of qualified participants

In Fig. 12, you can see the Q:R (ratio of qualified applicants to requested participants) for B2B moderated remote studies. There were 2 incentive amounts ($110, $180) that resulted in a high Q:R of 3 (meaning that for every one participant requested by the researcher, 3 qualified participants were found), although the former had a higher sample size, so we’d consider that to be a more reliable data point.

Ratio of qualified participants by incentive (B2B, moderated, remote)

Comparing this to in-person studies in Fig. 12a, you can see that the highest Q:R is 1.9 for an incentive amount of $90. However, the sample size here is quite small (4 studies), so it’s also worth considering the next-highest Q:R of 1.0 for an incentive amount of $200, because it has a much higher sample size.

A Q:R of 1.0 isn’t exceptional—for every 1 participant requested by the researcher, 1 qualified participant was found, which doesn’t give you a ton of wiggle room for no-shows—but it does successfully fill the study.

Ratio of qualified participants by incentive (B2B, moderated, in-person)

In Figs. 13 and 13a, we look at the Q:R for B2B multi-day studies, split up by remote vs. in-person studies. For remote studies, the highest Q:R is 3.8 for an incentive amount of $100, which means that for every 1 participant requested by the researcher, 3.8 qualified participants were found and applied to the study.

Ratio of qualified participants by incentive (B2B, multi-day, remote)

We also looked at the Q:R for in-person studies, but this sample size was quite small (only 2 studies), so we recommend taking this data with a grain of salt.

Ratio of qualified participants by incentive (B2B, multi-day, in-person)

Best incentives for a low no-show rate

In Fig. 14, we look at no-show rates for B2B moderated remote studies. The lowest no-show rate (1.9%) came with an incentive amount of $140.

No-show rates by incentive amount (B2B, moderated, remote)

For in-person studies (Fig. 14a), the lowest no-show rate (0.0%) with the highest sample size comes at a $100 incentive.

No-show rates by incentive amount (B2B, moderated, in person)

No-show rates by incentive amount (B2B multi-day)

Recommended incentives for moderated B2B research

Based on these numbers, here are our incentive recommendations for moderated B2B studies by study type:

- 1:1 interviews: $85/hr (remote) or $140/hr (in-person)

- Focus groups: $95/hr (remote) or $135/hr (in-person)

- Multi-day: $155/study (remote) or $375/study (in-person)

Incentives for moderated B2B studies

Looking for a single benchmark? We recommend a baseline incentive of $100/hour for professional remote studies. It had, by far, the most completed projects and the lowest no-show rates.

Unmoderated research incentives

Researchers often need to employ both qualitative and quantitative methods to find the answers they’re looking for.

Average incentive amounts for unmoderated studies

In Fig. 17, you can see the average incentive amounts offered for unmoderated studies.

Incentives for B2B studies were slightly higher, ranging from $80 to $100 per hour (or $1.30–$1.60/min) depending on the study length. B2C studies, on the other hand, ranged from $50 to $80 per hour (or $0.83–$1.30/min) based on study length.
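For readers who want to check the per-minute figures, the conversion is simply the hourly rate divided by 60. The short sketch below uses the hourly amounts quoted above; the function name is ours. Note that the exact conversions for $80 and $100 come out a few cents above the per-minute figures quoted in the text, which appear to have been rounded down.

```python
def per_minute_rate(hourly_rate):
    """Convert an hourly incentive into a per-minute rate."""
    return hourly_rate / 60


# Hourly amounts quoted in this section.
for hourly in (50, 80, 100):
    print(f"${hourly}/hr is about ${per_minute_rate(hourly):.2f}/min")
```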

Average incentives for unmoderated studies

Most common incentive amounts for unmoderated studies

Take a look at the most common incentives for unmoderated studies in Fig. 18. Again, B2B studies tend to offer higher incentives, ranging from $60 to $100, while B2C studies fall at a steady baseline of $60 regardless of study length.

Most common incentives for unmoderated studies

Best incentives for a high ratio of qualified participants

Next, we looked at Q:R (the ratio of qualified to requested participants) for B2C and B2B unmoderated studies.

As you can see in Fig. 19, the highest Q:R (excluding an outlier of 1 study) for B2C unmoderated studies is 3.7 for an incentive amount of $20, meaning that for every 1 participant that the researcher requested, 3.7 qualified participants were found.

Ratio of qualified participants by incentive amount (B2C, unmoderated)

In Fig. 20, you can see that for B2B unmoderated studies, the highest Q:R of 2.6 is shared between $30 and $50 incentives. Because the $50 incentive has a much higher sample size, this is probably a more reliable incentive amount for recruiting and filling a study.

For both B2C and B2B studies, the most commonly offered incentive of $60 had a slightly smaller Q:R, but this incentive still quite reliably netted at least 2 qualified participants per requested participant.

Ratio of qualified participants by incentive amount (B2B, unmoderated)

Best incentives for a low no-show rate (unmoderated)

For an unmoderated study, a “no-show” could mean a number of things. Just as with moderated sessions, it can be someone who was approved for a study and never participated. It could also be a participant who said they completed the task when they didn’t, or one who did not finish a task on time.

Lowest no-show rates by incentive amount (B2C, unmoderated)

For B2C studies, the lowest no-show rate fell at 8.2% for an incentive of $160 per hour (or $2.60/min). For B2B studies, the lowest no-show rate was 2.3% for $110 per hour (or $1.80/min). For both B2B and B2C studies, the most common incentive amount ($60 per hour / $1 per minute) had a much higher no-show rate of nearly 30%.

Lowest no-show rate by incentive amount (B2B, unmoderated)

Recommended incentives for unmoderated research

We’ve looked at the average and most common incentives for unmoderated research, as well as the incentives which result in the highest number of qualified applicants and the lowest no-show rates.

Average and most common incentives for unmoderated studies

Based on these numbers, here are our recommendations for unmoderated B2C studies by study length:

- <15 mins: $1.50/min

- 15–29 mins: $140/min

- 30–60 mins: $135/hr

- 1 hr+: $375/study

And here are our recommendations for unmoderated B2B studies by study length:

- <15 mins: $1.50/min

- 15–29 mins: $85/min

- 30–60 mins: $95/hr

- 1 hr+: $155/study

🎁 Alternatives to cash incentives

Is your audience less motivated by cash-like incentives?

You might need to try something else, as Product Discovery Coach Teresa Torres explained on our podcast:

"I think for enterprise clients, cash is rarely the right reward, so you have to look at: What's something valuable that you can offer? And it could be anything from inviting them to an invite-only webinar. It could be giving them a discount on their subscription for a month. It could be giving them access to a premium helpline. You've got to just think about: What is outsized value for the ask that you're asking for?"

The right incentive should be something that gets your customers excited about participating in your research—and that’s not always cash. Often, alternative incentives will be most useful for high-level occupational targeting and for your own customers.

Consider offering them:

- Specific in-product benefits

- Behind the scenes access

- Charitable donations

- Awesome swag

Moral of the story? Get creative. Although in many cases cash is the right move, it’s not the end-all-be-all of research incentives.

⚙️ How to automate incentive distribution

Still distributing incentives manually? Free up your time, save yourself some effort, and avoid the logistical headache of fulfilling incentives at scale by automating incentive distribution.

With User Interviews, you can manage participant payments yourself—or you can let us handle it. If you choose the latter, we will automatically send incentives to participants after you mark their sessions as “completed” within the platform.

From there, the participant will receive a link where they can redeem their reward in the form of dozens of digital gift cards, available in multiple currencies.

Gift card options vary by country, but in the U.S., participants can choose from options like Amazon, Target, Whole Foods, Starbucks, Uber, Southwest Airlines, and more. Participants can even split their incentive balance between multiple gift cards, giving them greater flexibility in how they receive their reward!

Currently, these incentives are available in:

- US dollars (USD)

- Canadian dollars (CAD)

- Australian dollars (AUD)

- British pounds sterling (GBP)

- Euros (EUR)

We automatically convert the payment so participants receive their incentive in the correct amount relative to the currency they select.

⭐️ In summary

Just scrolled to the end to get the bottom line? We see you, we feel you. Here’s the TL;DR…

We pulled a sample of nearly 20k successfully completed projects launched with User Interviews in the past year. Here’s the rundown of what we learned:

Here are our recommendations for moderated and unmoderated studies one more time:

Optimal incentives for moderated studies

Optimal incentives for unmoderated studies

Whatever incentive type you choose to offer, try to be fair. Participants are offering their time, their experience, and their thoughts, and that’s valuable stuff. If it wasn’t, why would we be doing research in the first place?

Fairly rewarded participants = happy, honest, excited participants. Plus, offering the right incentive the first time means you’ll waste less time sitting around waiting for no-shows and recruiting people to take their place.