Note to the reader:

This part of the field guide comes from our 2019 version of the UX Research Field Guide. Updated content for this chapter is coming soon!


Erika Hall, co-founder of Mule Design, describes surveys as “the most dangerous research method.”

Why? Because although surveys are one of the easiest research methods to conduct, they’re also one of the easiest methods to mess up.

A survey is a set of questions you use to collect data from your target audience. In the words of survey expert Caroline Jarrett, a survey is:

“A process of asking questions that are answered by a sample of a defined group of people to get numbers that you can use to make decisions.”

Surveys are appealing because they’re cost-effective while having the potential to reach large numbers of people quickly and easily. You can ask almost anything, and the results are easy to tally.

However, these are the exact same reasons why surveys are so dangerous; surveys are deceptively simple. Although they’re relatively easy and inexpensive to implement, you can’t let this ease fool you into cutting corners. Getting valid and valuable data from a survey still requires strict adherence to best practices.

What’s the difference between a survey, a questionnaire, and a poll? The terms ‘survey’ and ‘poll’ can be used interchangeably, while a questionnaire refers to the content of the survey/poll you’re conducting:

In UX research, surveys are typically used as an evaluative research method, but they can also be used in generative research and as a continuous research method. In-product surveys, for example, are an easy and effective way to automate ongoing data collection.

There is a variety of approaches you can take to designing and implementing a survey. Here’s an overview of the different types of survey methods in UX research.

Quantitative surveys collect a large number of responses to questions that can be answered using checkboxes or radio buttons. These types of surveys are designed to answer “how many” questions; like all quantitative research methods, these types of surveys are meant to deliver statistically meaningful results representative of the broader population.

Quantitative survey types include descriptive and causal:

Qualitative surveys use open-ended, exploratory questions to collect more detailed comments, feedback, and suggestions. While these types of responses cannot be as quickly and easily tallied as the data from quantitative surveys, the insights they provide can be very valuable. Researchers often run a qualitative survey with a small group to gain a deeper understanding of the respondents and identify the best questions and answers to include in a quantitative survey aimed at a larger group.

Cross-sectional surveys look at a specific, isolated situation and solicit a single set of responses from a small population sample over a short period of time. The aim is to get quick answers to a standalone question. This method is purely observational and does not measure causation.

Longitudinal surveys collect a series of responses from a larger group of participants over a longer period of time (from weeks to decades). This observational method helps researchers study change over time, and includes three types of studies: trend, cohort, and panel. These long-term studies are most successful when designed around short, frequent sessions, instead of lengthy interviews that can be burdensome for participants.
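In a panel study, the same people answer in every wave, so you can measure change within each person. A trend study, by contrast, surveys a fresh sample from the same population each time. The sketch below shows that within-person comparison. The participant IDs, the 1–5 satisfaction scale, and the dropout are all hypothetical.

```python
# Hypothetical panel data: satisfaction (1-5) from the same respondents in two survey waves
wave_1 = {"p1": 3, "p2": 4, "p3": 2, "p4": 5}
wave_2 = {"p1": 4, "p2": 4, "p3": 4}  # p4 dropped out of the panel before wave 2

def within_person_change(before, after):
    """Change per respondent, using only people who answered both waves."""
    return {pid: after[pid] - before[pid] for pid in sorted(before.keys() & after.keys())}

changes = within_person_change(wave_1, wave_2)
print(changes)  # {'p1': 1, 'p2': 0, 'p3': 2}
print(f"mean change: {sum(changes.values()) / len(changes):.2f}")  # mean change: 1.00
```

Participants who drop out, like `p4` here, are excluded from the comparison. This is one reason short, low-burden sessions matter in long-running studies.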

Retrospective surveys combine longitudinal and cross-sectional elements by looking at change over a long period of time via a one-time survey. This approach helps reduce the time and money needed to perform the survey, but can introduce accuracy issues depending on participants’ memory recall.

Ultimately, choosing the right research method for you will depend on your goals and the questions you’re hoping to answer.

Surveys are a popular and advantageous research method for many reasons:

As Erika Hall explains,

“It is too easy to run a survey. That is why surveys are so dangerous. They are so easy to create and so easy to distribute, and the results are so easy to tally. And our poor human brains are such that information that is easier for us to process and comprehend feels more true. This is our cognitive bias. This ease makes survey results feel true and valid, no matter how false and misleading. And that ease is hard to argue with.

It’s much much harder to write a good survey than to conduct good qualitative user research. Given a decently representative research participant, you could sit down, shut up, turn on the recorder, and get good data just by letting them talk. … But if you write bad survey questions, you get bad data at scale with no chance of recovery.”

That’s why it’s critical you take the time to create a thorough and effective user research plan when designing a survey.

It can be tempting to use surveys for all kinds of quantitative research, but that’s not always the best use of the method. While surveys are undeniably a relatively fast, unmoderated, and low-cost way to compile a lot of data on a lot of people, there are many ways that data can be skewed to deliver biased or otherwise compromised results.

A better use of a survey is as a qualitative tool that helps you explore and define the area of investigation. Using open-ended survey questions as a starting point can help you gain a much deeper understanding of the subject at hand and the end users you’re surveying. And that understanding is invaluable for mitigating the risk of designing subpar solutions as well as increasing your ability to design better products more efficiently.

Thorough and thoughtful planning and preparation are crucial to survey success. You need to be clear and explicit about your goals. One of the most common mistakes new researchers make is to cast a wide net, asking for responses to a wide range of questions, and then to try to reverse-engineer a central goal from the resulting data.

A strong survey explores a very specific and clearly defined research question via well-written, bias-free survey questions and an optimized survey flow.

The research question is different from the individual questions that you eventually include in your questionnaire. A research question defines the topic you are exploring. It is the ‘big idea’ at the heart of your survey.

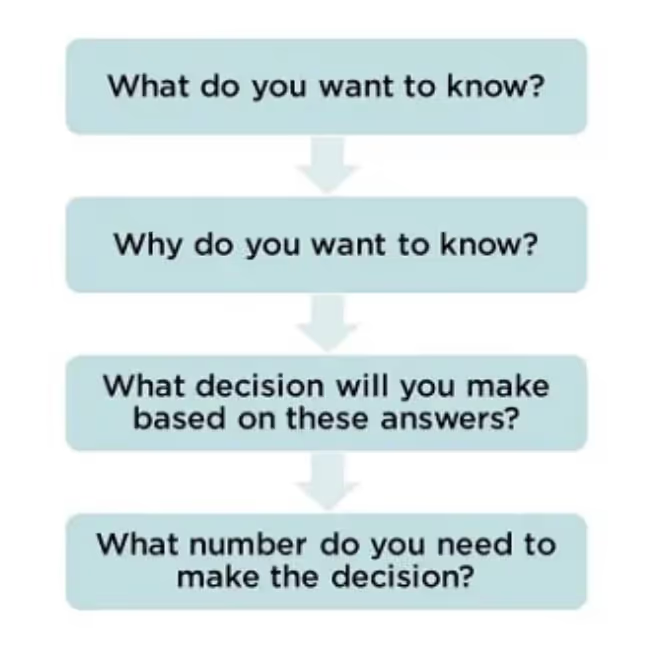

Identifying your research question typically starts with brainstorming a list of all the possible research questions. From there, you refine the question to its essence. Caroline Jarrett does this by running each possible question through a series of four challenges:

As you run your various questions through this 4-part challenge, you’re looking for your Most Crucial Question (MCQ), which Caroline defines as, “the one that makes a difference. It’s the one that will provide essential data for decision-making.” She suggests stating your question in two parts:

And don’t stop there. Before you settle on your MCQ, you want to really pick it apart by questioning what you mean by each word. You want to wind up with something that is incredibly clear and doesn’t require any interpretation. For more info on how to “attack” your MCQ in this way, check out this article by Effortmark.

Remember, in order for your research to make a meaningful impact, it needs to be designed to enable decisions for your company, product, or service. Read more about a framework for doing decision-driven research.

Clarity and specificity are the two most important characteristics of effective survey questions. With that in mind, here are our top tips for creating questions that help you get the answers you need:

Mixing up the types of questions on your survey can help you collect the most insightful data. There are two main types of questions: open and closed.

Open-ended survey questions allow participants to respond in their own words. This type of question typically generates a great deal more qualitative detail and can uncover unexpected responses. Open-ended questions help you get at the “why” behind people’s answers. However, it can be difficult and time-consuming to analyze and draw conclusions from open-ended responses.

Closed survey questions have pre-populated response options that participants choose using tools like checkboxes or radio buttons. Closed survey questions tend to have higher response rates because they require less effort from the respondent. Because the response options are standardized, closed questions deliver data that is easier to tally and to analyze statistically.
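Tallying a closed question can be as simple as counting each predefined option. The sketch below also reports options that nobody chose, because a zero is itself a finding. The question, its options, and the answers are hypothetical.

```python
from collections import Counter

def tally(responses: list[str], options: list[str]) -> dict[str, tuple[int, float]]:
    """Count each predefined option and its share (%) of all responses."""
    counts = Counter(responses)  # Counter returns 0 for options nobody picked
    total = len(responses)
    return {
        option: (counts[option], round(100 * counts[option] / total, 1) if total else 0.0)
        for option in options
    }

# Hypothetical answers to "How often do you use the product?"
answers = ["Weekly", "Daily", "Weekly", "Monthly", "Weekly", "Daily"]
options = ["Daily", "Weekly", "Monthly", "Rarely"]
for option, (count, pct) in tally(answers, options).items():
    print(f"{option:<8} {count:>3}  {pct:>5}%")
```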

There are several different types of response options for closed survey questions:

It is very easy to accidentally insert bias into your survey questions, and participants can also be biased in their responses. One example is the Hawthorne effect, which causes people to change their behavior when they know they are being observed. Another is acquiescence, or agreement, bias, which causes survey respondents to agree with research statements in an effort to seem agreeable or to give the answer they think you want.
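Researchers sometimes check for acquiescence by including pairs of statements where one is the reverse of the other. A respondent who agrees with both statements in a pair may be agreeing by default. The sketch below flags those respondents. The 5-point scale, the agreement threshold of 4, and the item names are hypothetical. A flag is a prompt to look more closely, not proof of bias.

```python
# Hypothetical 5-point agreement scale: 1 = strongly disagree ... 5 = strongly agree.
# Each pair holds a statement and a reverse-worded version of it, e.g.
# "The dashboard is easy to use" vs. "The dashboard is hard to use".
PAIRS = [("easy_to_use", "hard_to_use"), ("saves_time", "wastes_time")]

def flag_acquiescence(answers: dict[str, int], pairs=PAIRS, agree_threshold: int = 4) -> bool:
    """Flag a respondent who agrees with both a statement and its reverse in any pair."""
    return any(
        answers[a] >= agree_threshold and answers[b] >= agree_threshold
        for a, b in pairs
    )

respondents = {
    "r1": {"easy_to_use": 5, "hard_to_use": 4, "saves_time": 4, "wastes_time": 2},
    "r2": {"easy_to_use": 4, "hard_to_use": 2, "saves_time": 5, "wastes_time": 1},
}
flagged = [rid for rid, answers in respondents.items() if flag_acquiescence(answers)]
print(flagged)  # ['r1']
```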

Here are a few different kinds of bias that can sneak into your questions:

Here are a few examples from Miro of poorly worded survey questions and how to fix them:

The ‘right’ questions here are all better because they’re more open-ended and they don’t make assumptions about the respondent’s experience. Instead of leading respondents to the answer you’re looking for, let them lead you to the details and experiences that actually matter to them.

Since it’s impossible to survey every person who currently uses or may someday use your product, survey research relies on the statistical concept of sampling to select and survey certain individuals from a broader target population.
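Simple random sampling is one common way to select survey invitees. Every member of the target population has an equal chance of being chosen. The sketch below draws such a sample from a list of hypothetical user IDs. The population size, the sample size, and the seed are assumptions for illustration.

```python
import random
from typing import Optional

def simple_random_sample(population: list[str], n: int, seed: Optional[int] = None) -> list[str]:
    """Draw n distinct members, each equally likely to be chosen."""
    if n > len(population):
        raise ValueError("sample size exceeds population size")
    rng = random.Random(seed)  # seeded generator so the same draw can be reproduced
    return rng.sample(population, n)

# Hypothetical user IDs standing in for the target population
users = [f"user-{i:04d}" for i in range(1, 5001)]
invitees = simple_random_sample(users, 400, seed=42)
print(len(invitees), invitees[:3])
```

A random draw only helps if the list you draw from actually covers your target population. Sampling from, say, newsletter subscribers tells you about newsletter subscribers.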

There are two main types of sampling:

In addition to determining the type of sampling most appropriate for your research, you also need to identify the right number of participants.

The right number of participants for you will depend on a number of factors, including the scope of your research, the type of study you’re running, and the available resources like budget and time. As a general rule, NN/g recommends 5–10 participants for qualitative studies and at least 40 participants for quantitative studies.
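Some quantitative surveys estimate a proportion, such as the share of users who would use a feature. For these, a standard statistical formula gives the number of completed responses needed for a chosen margin of error: n = z² · p(1 − p) / e². Here z is about 1.96 for 95% confidence, p is the expected proportion (0.5 is the most conservative choice), and e is the margin of error. The sketch below applies it, with an optional finite population correction. This is a general rule of thumb, separate from the NN/g guideline above. It assumes a random sample, and the population of 2,000 is hypothetical.

```python
import math
from typing import Optional

def sample_size_for_proportion(margin_of_error: float, z: float = 1.96, p: float = 0.5,
                               population: Optional[int] = None) -> int:
    """Completed responses needed to estimate a proportion within +/- margin_of_error."""
    n0 = (z ** 2) * p * (1 - p) / margin_of_error ** 2
    if population is not None:
        # Finite population correction: small populations need fewer responses
        n0 = n0 / (1 + (n0 - 1) / population)
    return math.ceil(n0)

print(sample_size_for_proportion(0.05))                   # 385
print(sample_size_for_proportion(0.05, population=2000))  # 323
```

These are completed responses. If you expect a low response rate, you need to invite many more people.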

Your approach to recruitment will depend on the type of survey you’re running.

There are many sources that can help you find large numbers of respondents to participate in broad surveys designed to deliver quantitative data. Online survey tools such as SurveyMonkey and Pollfish, for example, offer such panels. It is also often possible to source respondents by sharing your survey link in a relevant group on Facebook, LinkedIn, Slack, or other social platforms.

If you are looking for more targeted, qualitative responses from a more closely defined type of respondent, User Interviews can help you achieve the best results. Our Recruit tool allows you to source from a pool of more than 700,000 participants tailored to your exact specifications, and our Hub tool allows you to build and manage your own in-house panel.

Your survey results won’t be useful if you fail to survey the right people. Screener surveys help ensure that you’re recruiting high-quality participants who can provide the information and insights you need to make relevant decisions.
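Screening comes down to checking each applicant’s answers against explicit inclusion criteria before inviting them to the main survey. The sketch below encodes one hypothetical set of criteria: product managers or designers who use the product weekly and work at companies with 50 or more employees. The field names and the thresholds are assumptions.

```python
from dataclasses import dataclass

@dataclass
class ScreenerResponse:
    respondent_id: str
    role: str
    uses_product_weekly: bool
    company_size: int

def qualifies(r: ScreenerResponse) -> bool:
    """Hypothetical inclusion criteria for the main survey."""
    return (
        r.role in {"Product manager", "Designer"}
        and r.uses_product_weekly
        and r.company_size >= 50
    )

responses = [
    ScreenerResponse("r1", "Designer", True, 120),
    ScreenerResponse("r2", "Engineer", True, 300),
    ScreenerResponse("r3", "Product manager", False, 80),
    ScreenerResponse("r4", "Product manager", True, 60),
]
qualified = [r.respondent_id for r in responses if qualifies(r)]
print(qualified)  # ['r1', 'r4']
```

Writing the criteria down this explicitly makes it easy to see, and later to explain, why each person was or wasn’t invited.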

Here are a few quick tips for building effective screener surveys:

Analyzing and synthesizing your data is the final step in survey research. For larger surveys—more than 100 respondents—it makes sense to do quantitative analysis using mathematical and statistical methods. In addition to looking at which responses were most popular, you should also look for patterns in how different types of users answered various questions.
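To see how different types of users answered, you can break each closed question down by segment (a cross-tabulation). The sketch below does this with the standard library. The segments and answers are hypothetical. Libraries such as pandas offer `pandas.crosstab` for the same job on larger data sets.

```python
from collections import Counter, defaultdict

# Hypothetical rows: (respondent segment, answer to one closed question)
rows = [
    ("New user", "Very satisfied"), ("New user", "Dissatisfied"),
    ("New user", "Dissatisfied"), ("Power user", "Very satisfied"),
    ("Power user", "Very satisfied"), ("Power user", "Satisfied"),
]

def crosstab(rows):
    """Count answers separately for each segment."""
    table = defaultdict(Counter)
    for segment, answer in rows:
        table[segment][answer] += 1
    return table

def share(table, segment, answer):
    """Percentage of a segment that gave a particular answer (0.0 if the segment is empty)."""
    counts = table.get(segment, Counter())
    total = sum(counts.values())
    return round(100 * counts[answer] / total, 1) if total else 0.0

table = crosstab(rows)
for segment, counts in table.items():
    print(segment, dict(counts))
print(share(table, "New user", "Dissatisfied"))  # 66.7
```

Compare percentages within each segment rather than raw counts, because segments rarely have the same number of respondents.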

If your survey includes qualitative, open-ended questions, your review of the results will involve sentiment analysis. This approach looks at whether each response is positive or negative, as well as at the specifics of what the respondent said.

To effectively summarize sentiments so you can identify action steps, organize your results based on each question rather than each user. By grouping all the responses to each question together, you’ll easily be able to identify sentiment themes. You can then associate those themes with specific UX opportunities.
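The reorganization described above amounts to pivoting the data from per-respondent records to per-question lists. The sketch below does that pivot, then counts sentiment labels for each question. It assumes each response has already been labeled positive or negative, whether by a researcher or by a tool. The respondents, questions, and labels are hypothetical.

```python
from collections import Counter, defaultdict

# Hypothetical open-ended responses, one record per respondent:
# question -> (response text, sentiment label assigned during coding)
responses_by_user = {
    "u1": {"Q1": ("Setup was quick", "positive"), "Q2": ("Export is confusing", "negative")},
    "u2": {"Q1": ("Took too long to set up", "negative"), "Q2": ("Couldn't find export", "negative")},
    "u3": {"Q1": ("Easy onboarding", "positive")},
}

def group_by_question(responses_by_user):
    """Pivot per-user answers into per-question lists of (user, text, sentiment)."""
    by_question = defaultdict(list)
    for user, answers in responses_by_user.items():
        for question, (text, sentiment) in answers.items():
            by_question[question].append((user, text, sentiment))
    return by_question

by_question = group_by_question(responses_by_user)
for question, items in sorted(by_question.items()):
    tally = Counter(sentiment for _, _, sentiment in items)
    print(question, dict(tally))
    for user, text, sentiment in items:
        print(f"  [{sentiment}] {text} ({user})")
```

Reading all the negative answers to Q2 side by side is what makes a theme like “export is hard to find” stand out.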

There are lots of different survey tools available, many of which we have captured in our UX Research Tools Map.

Here are just a few of the most popular survey tools:

Surveys are an extremely flexible research method and are particularly effective as part of a hybrid or mixed-methods approach to research.

For instance, you can combine a quantitative survey with qualitative interviews. Or you could use a structured survey to complement observational field work. You can also use surveys to compare participants’ self-reported data to their actual behavior. The possibilities are nearly endless.
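To compare self-reported data with actual behavior, you can join each respondent’s survey answer to a behavioral measure for the same person. The sketch below compares self-reported weekly logins against logins counted in product analytics. Both data sets and the user IDs are hypothetical. It assumes survey responses can be linked to usage records with the respondent’s consent.

```python
# Hypothetical data: self-reported weekly logins (survey) vs. logins counted in analytics
self_reported = {"u1": 5, "u2": 3, "u3": 7, "u4": 1}
logged = {"u1": 2, "u2": 3, "u3": 4, "u5": 6}

def report_gaps(self_reported, logged):
    """Return (user, reported, actual, difference) for users present in both sources."""
    shared = sorted(self_reported.keys() & logged.keys())
    return [(u, self_reported[u], logged[u], self_reported[u] - logged[u]) for u in shared]

gaps = report_gaps(self_reported, logged)
for user, reported, actual, diff in gaps:
    print(f"{user}: reported {reported}, logged {actual}, gap {diff:+d}")
over = sum(1 for *_, diff in gaps if diff > 0)
print(f"{over} of {len(gaps)} respondents over-reported")
```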

And there you have it—the “most dangerous research method,” made safer and simpler with intentional survey design and best practices.
