Note to the reader:

This part of the field guide comes from our 2019 version of the UX Research Field Guide. Updated content for this chapter is coming soon!


Aristotle said:

“We are what we repeatedly do. Excellence, then, is not an act but a habit.”

Likewise, excellence in UX research is not achieved with a single study, but with habitual acts of research. Of course, one-off studies are still incredibly important, and we’re not suggesting you drop them in favor of continuous research. Instead, combining one-off studies with continuous research can take your UXR practice to a whole new level.

When you make it a habit to interview customers on a weekly, biweekly, or monthly basis—whatever cadence works best for you—you can build your research muscle and use fresh, relevant customer insights to drive your decisions.

Continuous user interviews are frequent 1-1 meetings with customers for the purpose of collecting ongoing insights. Unlike user interviews conducted as part of a dedicated study, continuous user interviews are quicker, recurrent, and open-ended in nature. They’re an important part of the continuous UX research framework and, more broadly, the continuous delivery framework used by agile product teams.

Specifically, continuous user interviews fall into the ‘continuous exploration’ stage of the continuous delivery process, as demonstrated in this graphic by Scaled Agile, Inc:

Product Discovery Coach Teresa Torres likens continuous research to “putting money in a bank.” In other words, recurring interviews allow you to collect insights, like coins, which can have a compounding effect over time.

Continuous interviews can take many different forms, including:

When you’re deciding what continuous interviews should look like at your company, focus on your goals. Every organization is unique in how its teams are structured and what they’re hoping to accomplish with continuous discovery, so any given format may or may not be the right approach for you.

For example, if you’d like to generally create more opportunities for your team to interface with customers and boost empathy across the org, you might lean toward broad or team-based interviews. If you’d like to simplify recruiting for one-off studies, you can build an advisory board to have the infrastructure in place when you need to access a pool of participants.

Or, you might choose to implement some combination of the different types to encourage diverse and recurring conversations with customers throughout the organization (in addition to project-based studies for deeper analysis).

If it weren’t obvious from our name, we’re big fans of user interviews. But adding continuous user interviews into the mix? Even better.

Here are some of the benefits and opportunities associated with continuous interviews.

Continuous user interviews allow you to discover opportunities, pain points, risks, and other customer feedback that can lead to new ideas or product iterations (or stop a bad product decision in its tracks).

As Laura Carroll, Sr. Design Manager, says in her article on implementing continuous user research:

“[Continuous research—as opposed to a traditional study approach—] creates a deeper understanding of users by creating more space for open dialogue than a study focused on a specific feature or problem area would.”

Most product teams have a long list of “wishlist” items that they’d like to work on.

The trouble is, it can be difficult to know for certain which projects are worth working on, and when to tackle them. When you’re regularly checking in with customers, you’ll likely hear them bring up specific goals or pain points that help you decide what to work on next.

Product Discovery Coach and Founder of Product Talk Teresa Torres describes this benefit in her blog on continuous interviewing:

“When a product team develops a weekly habit of customer interviews, they don’t just get the benefit of interviewing more often, they also start rapid prototyping and experimenting more often. They do a better job of connecting what they are learning from their research activities with the product decisions they are making.”

Last but not least, continuous interviewing helps foster an appreciation for—and habitual use of—research throughout your organization.

With regular user check-ins, you can create a healthy culture of curiosity and openness among your team. Research becomes a need-to-have practice of its own, independent of confirmation bias or the urge to just “check boxes.”

User Researcher Gregg Bernstein joined the Awkward Silences podcast to talk about healthy research cultures:

“A sign of an unhealthy research practice is where research is pigeonholed into: ‘we're only going to use research to test or validate something that's already been decided.’”

In other words, continuous research broadens your teams’ conceptualization of research, from a step that’s only taken when specific questions arise to a process that can spark questions no one would’ve thought to ask otherwise.

You might be thinking, “the more research, the better, right?”

Not necessarily. As you conduct user interviews on a more frequent basis, you may run into some growing pains.

Although we’re big proponents of continuous user interviews, we also understand that they come with some challenges and risks. These challenges can be mitigated with careful planning and effective tools, but it’s important to be aware of (and plan for) them ahead of time.

The main drawbacks of continuous user interviews are:

Continuous user research reduces the risk involved in product development by grounding all of your decisions in tangible user insights.

When Teresa Torres, product discovery coach and founder of Product Talk, joined the Awkward Silences podcast, she discussed the history of user research and how it’s evolved to include continuous research:

“We've seen over the last 15, 20 years, a lot of growth in user experience design and user research. But a lot of that growth has been fueled by a project mindset… so there's this idea that research is a phase that happens at the beginning….

I think what we're finding as we move towards more of a continuous improvement mindset, both on the delivery and the discovery side, is that there's always questions that could benefit from customer feedback.”

In other words, research has shifted from one-off projects that answer specific research questions to an ongoing practice that reminds us that users will always be the best advocates for themselves. Teams are often surprised, disappointed, or taken off guard by the insights they learn from routine interviews, and these insights lead products and businesses in unexpected (yet valuable) directions.

Oyster’s Lead UX Researcher Dr. Maria Panagiotidi says in her how-to guide to introducing continuous research habits:

“Continuous research is a form of proactive research—we constantly schedule user interviews without having a specific project. When we don’t have a focused project, the sessions can be used to help us discover user pain points and uncover opportunity areas. In cases we have specific questions, continuous research allows us to speed up recruitment!”

In short, continuous research and project-based research inform and accelerate each other.

Speaking of recruitment….

Recruitment is consistently—across projects, teams, and industries—one of the biggest hurdles researchers face.

As Teresa Torres says on the Awkward Silences podcast:

“I think the biggest [objection] I hear is, I'm not allowed to talk to customers. I don't know how to find customers. Takes three weeks to recruit customers. I really think the biggest barrier to continuous interviewing is getting somebody to talk to on a regular basis.”

So how do you manage this challenge? Teresa says the very first thing to do is automate your recruiting process.

📚 If recruitment is a major bottleneck for you, we’ve created an entire guide on how to break this bottleneck, speed up research cycles, and improve the impact of UXR. We recommend downloading a copy of the guide to have on hand for recruitment best practices, but here are a few tips for recruiting and managing an internal participant panel in the meantime:

Before you can start reaching out to customers to sign up for your panel, you need to make sure that all of your technical boxes are checked.

To effectively build and manage an internal participant panel, you’ll need:

… as well as any other technical requirements specific to your company, such as a means for collecting signatures for NDAs.

Who’s in charge of this thing?

Even if multiple teams across your company intend to recruit participants from your internal panel, it still helps to assign a dedicated panel manager for oversight and quality control.

As Jeanette Fuccella, Director of Research & Insights at Pendo.io and former Principal User Experience Researcher at LexisNexis, says in her article about managing an internal user research panel:

“By all measures the panel has been a huge success, but we would never have been able to achieve this success without one key critical component: our panel manager.”

Typically, panel management would fall under the umbrella of research operations, and include responsibilities like:

… in addition to the other core functions of a Research Ops practice:

However, if your organization doesn’t currently have a dedicated ReOps function, you can assign one of your user researchers as the panel owner. Just be mindful of how many teams and projects they’re supporting at any given time—recruitment and panel management are big jobs, so it’s not reasonable to expect a lone researcher to handle them for large, scaling teams.

As with any study, you’ll want to be intentional about the type of participants you recruit to be on your panel. To get the most accurate insights, the users on your panel should be a diverse and varied group of people, reflective of your actual user base.

Consider criteria like:

Additionally, customers who actively sign up to participate on a user panel might have different characteristics—for example, greater extroversion, stronger opinions, or more engagement with your product—than those who decline your invite.

To get the best results, be sure to balance insights from your panel interviews with other types of research, such as A/B testing or NPS surveys.

Incentives are a great way to encourage panel sign-ups and thank participants for their time.

But before you start doling out cash, you should think about:

Once you have the logistics, criteria, and incentives plan nailed down, you can start recruiting customers.

Some options for soliciting panel sign-ups include:

Okay, so you’ve built your panel. Now what?

Here’s how to design and conduct user interviews as a habitual practice in the continuous research framework:

Let’s dive deeper into each step.

One of the most common objections to starting a continuous user interview practice (and, really, one of the most common excuses for not doing anything in life) is: “I don’t have time.”

Here’s why that excuse is bunk: Continuous interviews don’t have to be very long.

As we recommend in our Continuous User Interviews Launch Kit, one 30-minute session per week is ideal, but even 5-minute check-ins every week can work if that’s all you can manage.

Continuous interviews are all about building the habit, so choose a length of time you can realistically keep up with.

As far as the cadence of interviews goes, Teresa Torres, product discovery coach and founder of Product Talk, recommends once a week.

If you’re not there yet, challenge yourself to reduce the cycle time between interviews throughout the year. For example, if you’re doing 1/quarter, try for 1/month, then if you’re doing 1/month, try for 1/week, and so on.

“I think the number of people you talk to is the wrong metric…. If you have a glaring usability problem, and the first person you interview helps identify that, well, you don't really need to talk to another person…. Other things are more complex, and you might need to talk to more people. But I think this metric of reducing the cycle time between customer touchpoints encourages the right behavior of: ‘How do we just talk to customers as frequently as possible?’”
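If you want to keep an eye on that cycle time, a few lines of code (or a spreadsheet formula) will do. Here's a minimal sketch in Python, assuming you keep a simple log of interview dates. The function name and the sample dates are hypothetical and only there for illustration.

```python
from datetime import date
from statistics import mean


def cycle_times(interview_dates):
    """Return the gaps, in days, between consecutive interviews."""
    ordered = sorted(interview_dates)
    return [(later - earlier).days for earlier, later in zip(ordered, ordered[1:])]


# Hypothetical interview log for one team (not real data)
interviews = [date(2023, 1, 9), date(2023, 2, 6), date(2023, 2, 20), date(2023, 2, 27)]

gaps = cycle_times(interviews)
print(gaps)        # [28, 14, 7]
print(mean(gaps))  # average number of days between customer touchpoints
```

A shrinking list of gaps suggests the habit is taking hold, whether or not you've reached a weekly cadence yet.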

Schedule interviews whenever it works for you (and the participant). We recommend blocking off an hour or so each week to dedicate to interview sessions.

Outside of these regular interviews, you can schedule interviews at key milestones in the customer lifecycle to learn more about the specific experience of those milestones. Other opportunities for regular user interviews include:

In general, it’s better to involve multiple teams—not just the UX research team—in the interview process, for two reasons:

Of course, you don’t want to invite a dozen people to each interview—how overwhelming that would be for the participant! The key is to strike a balance between strategically sharing interviews among teams and allowing each team to conduct interviews independently.

GitLab, for example, uses a continuous interview framework in which product managers lead interviews, while allowing other team members to jump in to observe:

“Continuous interviews are open to all GitLab team members. The PM should notify their team Slack channel about upcoming interviews. All the team members and stable counterparts within a group are encouraged to take part in the interviews from time to time, so they can have first-hand experience listening to customer problems.”

You’re probably familiar with interview guides for project-based studies. The interview guide for a continuous practice can be more concise, general, and open-ended.

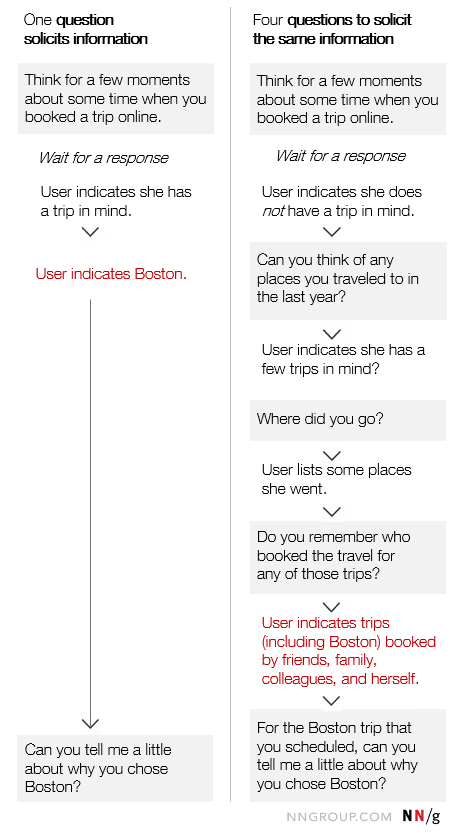

In fact, Teresa Torres says that just one question can do the trick for continuous interviews:

“You might have some warm up questions, just to build rapport. But the meat of your interview is what I think can be driven by one question. And it's a ‘tell me about a time when’ question.”

Why does “tell me about a time when…” work? Because it elicits a story from the participant, and stories are more likely to give you insight into the participant’s decision-making process and mental models.

However, it’s probably a good idea to plan out additional questions in case the first question doesn’t elicit the detailed response you hoped for. Here’s an example of how two different participants might answer the same question, and how to probe for more information when necessary:

Additionally, it’s helpful to ask questions about the past, not the future. This tip comes from Digital Product & Technology Transformation Leader Michaela Heigl in her article on continuous interviewing:

“Instead of asking ‘Would you pay for this (in the future)’ ask ‘Have you ever paid for something like this? How long? Why? What did you do before? Are you still using this service? If not, what made you stop?’

Asking about the solutions users found in response to a problem is more valuable than having to speculate about any action they may take in the future.”

By understanding how users behaved and made decisions in the past, it’ll be easier for you to predict what they might do in the future.

No-shows are a bummer. And, unfortunately, you can’t avoid them completely.

However, you can take steps to reduce no-shows by making it as simple as possible for participants to schedule, remember, and show up to their sessions.

Here are a few tips, drawn from Oyster’s Lead UX Researcher Dr. Maria Panagiotidi’s article on continuous user interviews:

All of these tips can be easily implemented with Research Hub, the #1 recruitment and panel management solution on the market. With Hub, approved participants can schedule sessions from a calendar with your pre-set availability. You can also configure branding and communication defaults for automated emails to ensure consistency and professionalism as you remind participants of upcoming sessions.

Moderating interviews can be nerve-wracking, even for seasoned pros. If we could sum up what it means to be a great moderator for continuous user interviews in only 3 words, they would be: open, active, and empathetic.

Part of being open, active, and empathetic means giving the participant the floor—and avoiding controlling or re-directing the conversation too much. As Teresa Torres describes on Awkward Silences:

“The key is you never want to interrupt your interview participant. And even if what they're telling you is totally irrelevant, they're telling you it because they care about it. And if they care about it, you need to care about it.”

Just because you’ve asked all the questions in your interview guide doesn’t mean your work is done.

Before the interview concludes, ask the participant whether or not you can get in touch with them in the future. This will give you the opportunity to ask follow-up questions if they arise, address any complaints they brought up during the interview, or update them when you’ve released features that they specifically asked for.

Ferdinand Goetzen, CEO and Co-Founder of Reveall, explains two reasons why it’s important to let customers know when you’ve addressed a specific complaint:

“Number one is that the customer is happy because you fixed the problem, but number two, they start becoming less strict with other problems that might arise. What we find is that when you're very open with the customer, and if a customer really gets a feeling of, ‘Hey, I flagged this problem and it got fixed…’ They also cut you more slack on any potential future [issues or requests], be that usability problems, be that a bug.”

In other words, the simple step of following up with customers lets them know you’re listening, builds their trust, and improves customer relationships in the future.

Continuous interviews aren’t just a chance for you to chit-chat with your customers—they’re also an opportunity for the rest of your team to learn, as well. For them to do so, you need to effectively analyze and share the insights you discover from your interviews.

However, one thing to keep in mind is that the feedback you receive from continuous interviewing might be a bit more scattered than the feedback you collect from other, more focused research methods. The way you organize, distill, and share your insights will make a big difference in how and whether they’re used.

Some quick tips to get you started are:

Let’s go into detail on each.

Because you’re interviewing continuously, you won’t have a set “end” date for your interviews, signaling the time to start compiling and organizing data.

Instead, you need to do ongoing synthesis, ideally as soon as possible after each interview ends. According to Teresa Torres, one great way to do this is with short, one-page interview snapshots which provide an overview of what you learned:

“A lot of the teams that I work with, some of them literally print these [snapshots] out and put them in a binder in their workspace, so that when they have a question that comes up, they can flip through all their past interview snapshots and look at: How often are we hearing this? What are the interviews we need to go back and revisit? Who do we want to talk to, to learn more?”

These snapshots allow you to quickly summarize what you learned in individual talks with customers and provide an easy jumping-off point for doing a more comprehensive analysis of your vast archive of interview data later on.

Keeping your participants’ Personally Identifiable Information (PII) confidential is a good practice demonstrating respect for their safety and privacy, and it becomes an essential practice when your NDAs or consent forms promise that you’ll do so.

Before you share or archive any of the insights from your interviews, be sure to remove all PII from the files you intend to share.

For example, here’s a quick step-by-step guide to hiding the names of research participants in Zoom. This is just one step in ensuring the safe, ethical handling of participants’ data, but it’s a great one to get started with.
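For text files like transcripts and notes, a small script can handle a first pass at removing PII. The sketch below is a rough illustration in Python, not a complete solution. The patterns, placeholder labels, and `redact` function are our own assumptions, and simple regular expressions will miss plenty of PII (addresses, employer names, and so on), so a human review is still needed before sharing.

```python
import re

# Deliberately simple, assumed patterns; real-world PII is messier than this.
EMAIL = re.compile(r"[\w.+-]+@[\w-]+(?:\.[\w-]+)+")
PHONE = re.compile(r"\+?\d[\d\s().-]{7,}\d")


def redact(text, participant_names=()):
    """Replace emails, phone-like numbers, and known participant names with placeholders."""
    text = EMAIL.sub("[EMAIL]", text)
    text = PHONE.sub("[PHONE]", text)
    for name in participant_names:
        text = re.sub(rf"\b{re.escape(name)}\b", "[PARTICIPANT]", text, flags=re.IGNORECASE)
    return text


# Hypothetical transcript line (made-up participant and contact details)
sample = "Dana Lee (dana.lee@example.com, 555-010-1234) said onboarding felt slow."
print(redact(sample, participant_names=["Dana Lee"]))
# [PARTICIPANT] ([EMAIL], [PHONE]) said onboarding felt slow.
```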

Interview snapshots, recordings, transcripts, and other data should be stored somewhere secure, yet accessible to others on the team.

Typically, this storage requires you to create some sort of insights repository, or a centralized database for all of your research. If you don’t already have one, taking the time to set one up might be the single most helpful operational task you can do.

For smaller teams, this can take the form of a simple spreadsheet or an interactive table in tools like Airtable, Notion, or Trello. But as your team and research practice scale, you’ll definitely want to switch to a more robust repository tool like EnjoyHQ or Dovetail.
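Whichever tool you pick, the underlying structure can stay simple. As a rough sketch, assuming each interview snapshot is stored as one record with a few theme tags, here's how you might answer "How often are we hearing this?" in Python. The field names and sample records are hypothetical.

```python
from collections import Counter
from dataclasses import dataclass, field
from datetime import date


@dataclass
class Snapshot:
    participant_id: str   # an anonymized ID, never a real name
    interviewed_on: date
    summary: str
    themes: list[str] = field(default_factory=list)


def theme_counts(snapshots):
    """Count how many interviews mention each theme (at most once per interview)."""
    counts = Counter()
    for snap in snapshots:
        counts.update(set(snap.themes))
    return counts


# Hypothetical repository contents (not real research data)
repo = [
    Snapshot("P1", date(2023, 3, 1), "Struggled to invite teammates.", ["onboarding", "pricing"]),
    Snapshot("P2", date(2023, 3, 8), "Skipped the setup checklist.", ["onboarding"]),
    Snapshot("P3", date(2023, 3, 15), "Wants a calendar sync.", ["integrations", "onboarding"]),
]

print(theme_counts(repo).most_common(1))  # [('onboarding', 3)]
```

The same columns (participant ID, date, summary, themes) map directly onto a spreadsheet or an Airtable or Notion table, so you can start there and move to a dedicated repository tool later.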

When you’ve created snapshots or other types of summaries that highlight important information from each interview, share them with the rest of your team! The more people who know about, consume, use, and share your insights, the greater the impact those insights will make.

If you can, try to tailor and contextualize your share-outs to each team you’re sharing them with. For example, engineers are likely to be interested in specific product-related feedback, and executives will be more likely to engage with short, easily-digestible deliverables.

In short, continuous interviews call for the same tools you might use for regular, one-off user interviews. These include:

If you’re doing other types of continuous research, you could also mix and match tools to combine insights. For example, you could use Research Hub for building and managing your participant panel for user interviews, then follow up with NPS surveys at certain activation moments using tools like Sprig or Appcues.

You can easily automate all study logistics—including posting calls for recruitment, pre-screening applicants, scheduling sessions, tracking participant activity, and distributing incentives—with a comprehensive panel management tool like User Interviews's Research Hub.


As we mentioned earlier, continuous user interviews shouldn’t replace one-off studies or other types of research.

In addition to your weekly (or monthly, or quarterly—but for ambition’s sake, let’s call them weekly) user interviews, use other qualitative and quantitative approaches to maximize insights from different audiences.
