Marie Prokopets has an advantage many founders don’t: experience and know-how in assessing markets and finding big opportunities.

Her unconventional path to becoming a founder included writing about emerging business trends, strategy consulting for Fortune 500 companies, and M&A work across a wide range of industries.

These combined experiences gave her a head start when she and serial entrepreneur Hiten Shah (who started Crazy Egg and KISSmetrics) worked together to found FYI. The two also run Product Habits, a free newsletter focused on product development.

The value proposition behind FYI is simple: the platform organizes information from all of your document creation, storage, and sharing apps (think G Suite, Dropbox, Slack, etc.) in one user-friendly location.

For an early-stage software product, FYI has had a strong initial response: more than 1,400 upvotes on Product Hunt, a Golden Kitty award in 2018, a guest appearance on the Product Hunt podcast, and plenty of Twitter mentions from early adopters and tech-famous folks (including a review from Naveen Selvadurai, co-founder of Foursquare). Together, these suggest they may have nailed product-market fit early, or at least found a potentially huge problem to solve.

But it took two failed products and a lot of research to reach the successful product they have today. Marie shared the tactical differences in customer research that separate success from failure: what questions to ask, which methods deliver the most value, and what to do when an MVP doesn’t resonate with customers.

In this post, we’ll learn about…

- How Marie and Hiten successfully pivoted from a failed product to FYI

- Why you should focus more on customer stories

- Which beta testing questions give the most insightful takeaways

- The subtle phrasing mistakes you can make that bias your results

- How she knows when it’s time to launch

Let’s start by digging into why her first two projects failed, and how she used those learnings to set FYI up for success.

Note: Looking for users to validate your approach? User Interviews offers a complete platform for finding and managing user research participants. Tell us who you want, and we’ll get them on your calendar. Sign up for free. You’ll only be charged for people who actually take part in your study.

Success from Failure: How FYI Was Born from Beta Testing a Now-Shelved Product

Marie and Hiten’s first collaboration was Dogo, a tool that let users send and receive feedback on pitch decks. People could add comments directly to a draft, then send it back for changes. Once they sent the deck to investors, they could track who opened it and how long each person spent looking at it.

To validate the idea, they didn’t do any real research beyond reflecting on their own experience. It didn’t take long for the duo to realize that their target market, entrepreneurs looking for funding, was uncomfortably small, and that the pain point Dogo solved wasn’t big enough.

Still hoping to do something with documents, they pivoted to a new product in 2017: Draftsend. It let you leave audio feedback on PDFs and see how long your colleagues spent looking at the document.

“With Draftsend, we did do research, but it was so flawed. We took what we learned on Dogo and then we said to ourselves, ‘Oh, audio is a big trend. People want audio, too.’ So when we did customer interviews, we’d ask questions like, ‘Hey, check out this idea, what do you think?’ And that obviously resulted in hugely biased results.”

It’s worth repeating: you’ll get skewed results by asking questions like, “What do you think of this idea?” People want to reaffirm what you’re doing. But just because they think the idea is cool doesn’t mean it’s something they’ll put down money for, nor does it mean that there’s enough of a market for your solution.

Draftsend generated a lot of excitement on Product Hunt’s upcoming product list. But Marie and Hiten immediately discovered that retention would be a problem. People weren’t regularly creating new content that they wanted to add audio to and the product required more effort than people had time for. “We realized we hadn't done the proper research on Draftsend. We haven't seen if there's actually a problem here to solve. We were just building something because we had a gut feeling,” she said.

Their solution was to open up Draftsend for early access (their preferred term for beta testing). Their survey for early access users got 700 responses. And the pain point the majority identified was not related to leaving feedback on and adding audio to presentations. It wasn’t even related to presentations or PDFs at all. The real problem they could solve for users? Finding and sharing their documents quickly and easily, across all the document tools they use.

They followed up with 52 customer development interviews to confirm what they had learned from the survey, far more than many startups bother to do. Those insights gave them what they needed to release FYI just six months after Draftsend.

What Made the Draftsend Early Access So Helpful

So how do you get from Draftsend to FYI? The key was asking the right question in the right way. Rather than asking leading questions that invite biased feedback, they kept the survey questions open-ended and more general than before.

The survey Marie distributed included basic information gathering questions about the respondent’s job role, company size, and so forth. They also asked which apps their customers used for creating and sharing documents.

But the main question that led them to launch FYI was this one:

What’s your single biggest challenge when it comes to creating and sharing documents?

The overwhelming winner was difficulty finding docs across multiple apps. Marie then added one more question to recruit help going forward:

How can you participate in helping us build this software?

That’s how they found the 52 people they interviewed. It also gave them a pool of participants for future user research.

Note: Struggling to find enough people to talk to? Tell us who you want, and we’ll get them on your calendar. Sign up for free. You’ll only be charged for participants who actually take part in your study.

When Beta Testing Products, Start with a Stripped-Down MVP & Competitor Analysis

After the survey and interviews, Marie and Hiten had an assumption they wanted to test. The fastest way to test it was to build a very simple MVP, which took them just five days to build and get into people’s hands.

“It was super scaled-down,” Marie recalled, “because there was one core thing we wanted to test. The MVP let you connect to five different apps and search for documents across those apps. That was it. It was just a search box. And what we really wanted to understand was, is a search box going to solve this problem?”

As they suspected, the answer was no. But unlike with their previous products, they now had evidence to back up that hunch, which freed them to start iterating on better solutions. Every week, they talked to about 10 people who used the MVP about their experience with it. They learned that many people don’t remember what their docs are called, which app they’re in, or what’s written inside them. A search box alone doesn’t solve the problem of finding documents.

Meanwhile, they researched competitors extensively. Who were they? How successful had they been? When one of them failed, what made them go under?

What they found was that a number of similar companies had either died or been acquired. If they wanted FYI to thrive, they needed to create the best way for people to find documents — and that had to go beyond just a search box. They needed a solution that could work for even the busiest and most forgetful businesspeople.

After putting out the MVP and learning that it wasn’t what people needed, they shut it down and started from scratch by doing user testing on prototypes of the user experience.

“We just kept doing research and iterating an interface until we got to what it looks like today,” she said. Then the real early access launch of FYI began.

Marie’s Top 3 Research Methods During Early Access

Marie does the research for FYI herself. While she loves testing all kinds of methods, these three are the cornerstones of her efforts:

- Surveys

- User interviews

- User testing (with clickable prototypes)

She uses those methods not only to test FYI’s progression but also to test and learn more about how real people respond to competitors’ UI and marketing messages.

“In the early access survey, we always ask a question about competitors. And then in the interviews, we always ask people what apps they're using and how they are solving the problem,” she said.

“In user tests, we actually like to test our competitors. Whether it's their marketing or the actual product, that lets us learn a ton about competitors. But more importantly, it lets us learn about the customers, because we start to understand what value props resonate with customers, what do customers care about, what our customer's problems are... So whenever we look at a competitor, it's always with the lens of the customer,” she continued.

Surveys

Marie finds surveys to be particularly helpful in doing generative research at the beginning of early access. The goal is to make sure you’re addressing the right problems in a way that will inspire user retention. It’s also a great way to screen for good interview candidates for further study. She also sneaks in some competitor research. In one of the sections below, we’ll dive into the questions she asks to make that happen.

User Interviews

Marie loves to use interviews to follow up on survey answers. They’re valuable for more than one reason, but her primary reason for conducting them is to understand the context and motivations behind what her customers say.

“I would say digging into customer stories is the most essential part of early access,” she emphasized. “It’s the stories that always lead to the most surprising insights.”

For example, when they were testing their MVP, they’d ask people to tell them about a specific time when they couldn’t find a document. The stories often looked a lot like this:

- John needs to find a document he made for a big upcoming customer presentation. He remembers which app he put it in, but can’t remember the title. And there are just so many documents there already.

- He remembers Josie was involved, so he Slacks her. She doesn’t have it but remembers that Steve was involved.

- John Slacks Steve, and Steve shares the document with him.

The takeaway?

“We learned that, when people can’t find documents, they’ll do a quick search sometimes, but oftentimes they go right into Slack or tap someone on the shoulder and ask them where the document is. We wouldn't have learned that had we not really dug into the stories."

That insight led to one of FYI’s features: the ability to find documents based on the people who created or shared them.

User Testing Prototypes

What would a good early access (beta) period be without some prototype testing? Marie likes to collect feedback on competitors’ offers and content as much as on clickable prototypes of the features under development for FYI.

Her favorite question to ask during a user testing session is, “Is there anything confusing on the screen?” It forces people to take an extra moment to reflect on what’s before them. If they don’t know what a button does, or why they can’t find something they’d expect to see, then they’ll usually bring that up.

Note: Have enough users, but don’t want to spam the same people over and over with survey and interview requests? Keep track of them with Research Hub: Bring Your Own. You can recruit, schedule, and pay your users all in one place.

Beta Testing Survey Questions for Products You’re Ready to Launch

Marie runs several surveys over the course of a beta testing period. Across early access as a whole, they hope to learn:

- A clearer understanding of the customer’s problem

- How to price the product

- Net Promoter Score (NPS) for FYI and for competitors

- Which features are most valuable

- Which marketing copy works best

Keep in mind that they can only get the more granular information and use it in a helpful way because they’ve already done the idea-validating research described earlier in this post (their research around Draftsend and testing the MVP of FYI).
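NPS, one of the survey goals listed above, is a simple calculation, which makes it easy to compare a product against its competitors on the same scale. The sketch below uses the standard NPS definition: respondents rate how likely they are to recommend a product on a 0 to 10 scale, promoters score 9 or 10, detractors score 0 to 6, and NPS is the percentage of promoters minus the percentage of detractors. The product names and scores are invented for illustration; the post doesn’t describe how FYI tabulates its own NPS results.

```python
def nps(scores):
    """Net Promoter Score from 0-10 'how likely are you to recommend' ratings.

    Promoters score 9-10 and detractors score 0-6; passives (7-8) count
    only toward the total. Returns a value between -100 and 100.
    """
    if not scores:
        raise ValueError("need at least one response")
    if any(not 0 <= s <= 10 for s in scores):
        raise ValueError("scores must be between 0 and 10")
    promoters = sum(1 for s in scores if s >= 9)
    detractors = sum(1 for s in scores if s <= 6)
    return 100 * (promoters - detractors) / len(scores)


# Hypothetical survey responses, for illustration only
responses = {
    "our product": [10, 9, 8, 7, 10, 6, 9, 3, 10, 8],
    "competitor A": [7, 8, 6, 5, 9, 7, 4, 8, 6, 10],
}

for product, scores in responses.items():
    print(f"{product}: NPS = {nps(scores):.0f}")
```

With this made-up data, the first product scores 30 (five promoters, two detractors out of ten) and the competitor scores -20. Asking the same question about competitors in the same survey keeps the comparison apples to apples.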

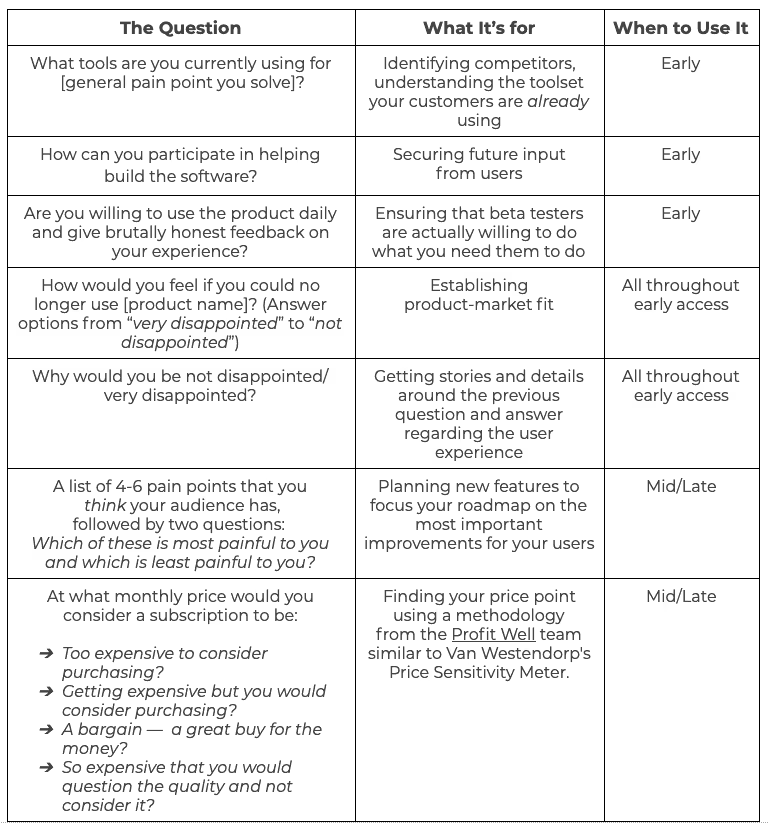

These questions are a good start, but it’s important to customize them and add more based on what you’re doing and what you hope to learn.

“When you write the questions, remove bias as much as possible. For example, if you’re asking what their biggest challenge is, you want to make sure that you're not getting really specific in the way you ask that question,” she said.

“For us in Draftsend, instead of asking, ‘What's your biggest problem with sharing and creating documents,’ we could've said, ‘What's your biggest problem when you're sharing documents and adding audio?’ It would have completely skewed the results. We would have missed the actual problem. We’d never have built FYI. So it's important to look at your survey after you've written it and make sure that you're not introducing any bias with the questions that you ask."

With that in mind, here are the questions that Marie uses:

Bonus: Questions to Use During User Interviews

Here are a few questions that Marie likes to ask the users who agree to an interview.

- How's your experience been with the product so far?

- Have you shared the product with anyone yet? Why did you share it? How did you share it? Or, why didn't you do that? (Note: this teases out what they think is missing from the product or what they love most about it.)

- Is there anything I should have asked you today that I didn’t?

Gauging Your Results

So once you’ve done the legwork — collected your findings and pored over the results — how do you know it’s time to exit early access and formally release your product?

Marie cares about three main signals:

- Retention rates: How often are people using the tool? Are they using it consistently?

- Product-market fit: She looks for more than 40% of users to say they would be ‘very disappointed’ if the tool were no longer available.

- Buzz about the tool: She keeps an eye out for Twitter mentions and other signs on social media and news channels that early adopters are talking about and sharing FYI.
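The first two signals come down to simple ratios. Here is a minimal sketch, assuming the product-market fit survey offers three answers (“very disappointed,” “somewhat disappointed,” “not disappointed”) and that retention is measured as the share of a signup cohort still active in a later week. The answer labels, the cohort definition, and all of the data are assumptions for illustration; the post doesn’t say exactly how Marie calculates either metric.

```python
def very_disappointed_share(answers):
    """Share of respondents who would be 'very disappointed' without the product.

    Assumes each answer is one of three labels (an illustrative choice):
    'very disappointed', 'somewhat disappointed', 'not disappointed'.
    """
    if not answers:
        raise ValueError("need at least one response")
    very = sum(1 for a in answers if a.strip().lower() == "very disappointed")
    return very / len(answers)


def retention_rate(cohort, active_later):
    """Fraction of a signup cohort (a set of user IDs) still active in a later period."""
    if not cohort:
        raise ValueError("cohort is empty")
    return len(cohort & active_later) / len(cohort)


# Hypothetical survey answers: 23 of 50 respondents are 'very disappointed'
answers = (["very disappointed"] * 23
           + ["somewhat disappointed"] * 17
           + ["not disappointed"] * 10)
share = very_disappointed_share(answers)
print(f"very disappointed: {share:.0%}, above 40%: {share > 0.40}")

# Hypothetical cohort: users who signed up in week 0, and users active in week 4
week_0 = {"u1", "u2", "u3", "u4", "u5"}
week_4 = {"u1", "u3", "u4", "u9"}  # u9 signed up later, so it isn't counted
print(f"week-4 retention: {retention_rate(week_0, week_4):.0%}")
```

Intersecting the cohort with the later active set keeps users who joined afterward from inflating retention. Buzz, the third signal, is qualitative and doesn’t reduce to a formula like this.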

Launching the tool after early access doesn’t have to mean you’ve iterated to your final product. It just means the early access (beta) version has improved enough for users to embrace it in the wider market. It’s wise to keep doing research after early access closes.

As for FYI, Marie and Hiten just launched early access for their next phase: team-based access to FYI.

Marie can’t wait to begin interacting with people, interpreting research, and planning FYI’s next steps.

“I think early access is one of the most exciting parts of product development. It's a time where you can hone in on the customer, their problems, and how you solve them the best way possible. You get to interact with customers so much. Just have fun with it instead of making it a stressful undertaking. It can be a more fun, lighthearted undertaking, and I would encourage people to make it like that."

About to start beta testing? User Interviews offers a complete platform for finding and managing user research participants. Tell us who you want, and we’ll get them on your calendar. Sign up for free. You’ll only be charged for people who actually take part in your study.