Recruiting for qual is way harder than quant. You don't just need a person; you need the right, articulate person who can explain their "why." The secret is your screener. Stop asking leading questions and always include an "articulation question" (e.g., "Describe the last book you read and how you felt about it") to check if they can give you more than one-word answers. This guide breaks down the methods (DIY is cheap/slow, agencies are pricey, platforms are fast/targeted) and common mistakes (like low-balling incentives—don't). Oh, and always, always have backups for no-shows.

Recruiting high-quality (read: engaged, relevant, verified) participants for qualitative research can be more difficult than recruiting for quantitative research, because:

- Audience criteria tend to be more specific

- Participants need to be articulate and expressive enough to describe their motivations

- Qualitative studies often require more time, effort, and emotional engagement from participants

- There are more opportunities for introducing bias

But just because recruiting is difficult doesn’t mean it’s impossible.

At User Interviews, our customers successfully recruit participants for qualitative research all the time. In fact, that’s why we exist: To simplify participant recruitment with advanced targeting, automated recruitment workflows, and a panel that scales with demand.

We’ve learned expert secrets and industry best practices for effective recruitment in qualitative research—and whether you’re a User Interviews customer or not, we’d like to share them with you here.

In this article, we’ll look at:

- Methods and strategies for recruiting qualitative research participants

- How to recruit participants for qualitative UX research studies

- Common recruitment mistakes to watch out for

- How User Interviews expedites participant recruitment without sacrificing quality

📣 Are you ready to start recruiting now? User Interviews is the only tool that lets you source, screen, track, and pay participants from your own panel, or from our 4-million-strong network. Sign up free today.

👀 At a glance

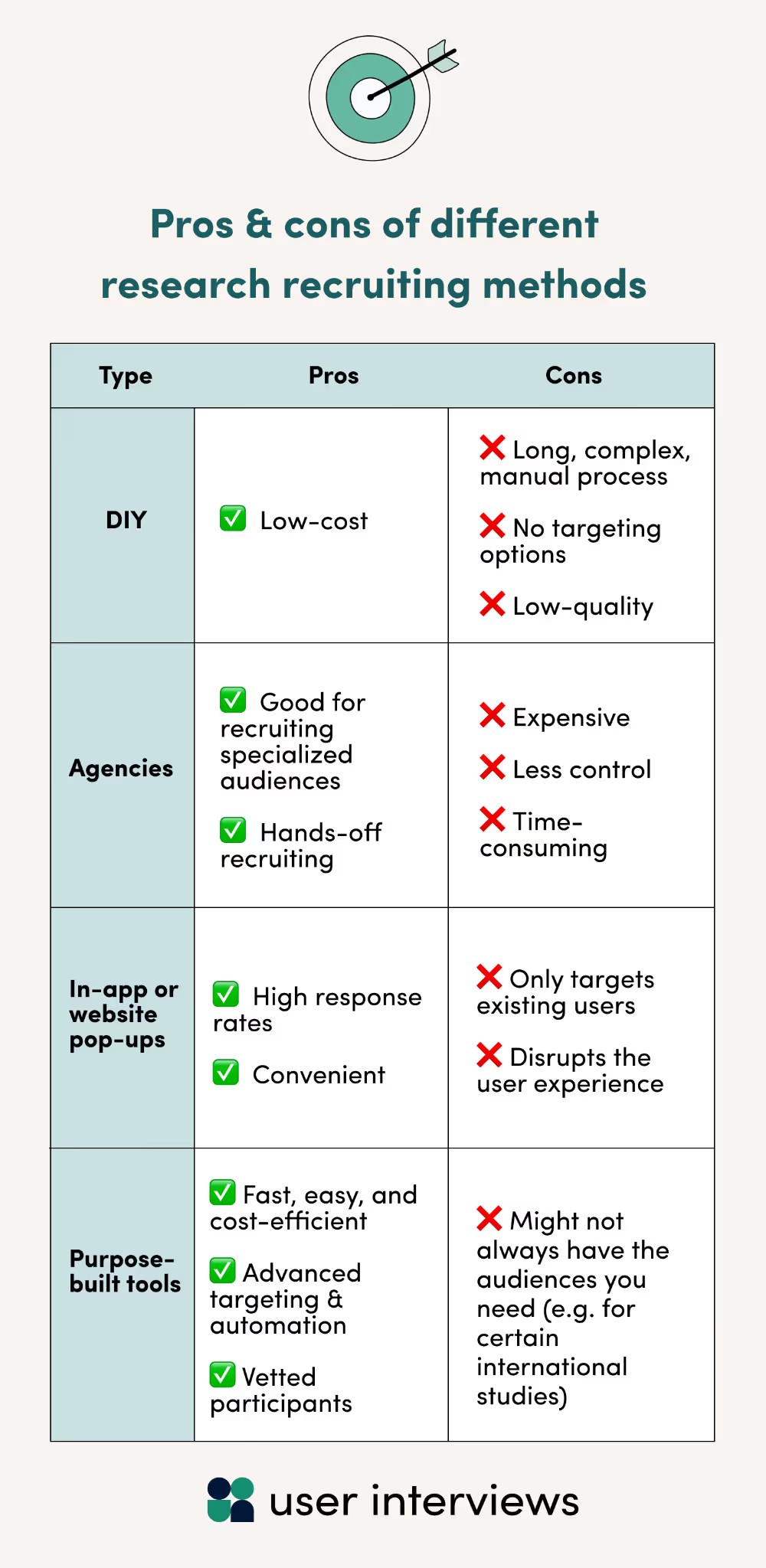

You can recruit participants for qualitative research through DIY channels like social media, recruiting agencies, in-app pop-up surveys, or a purpose-built tool like User Interviews. Each of these options has its own pros and cons.

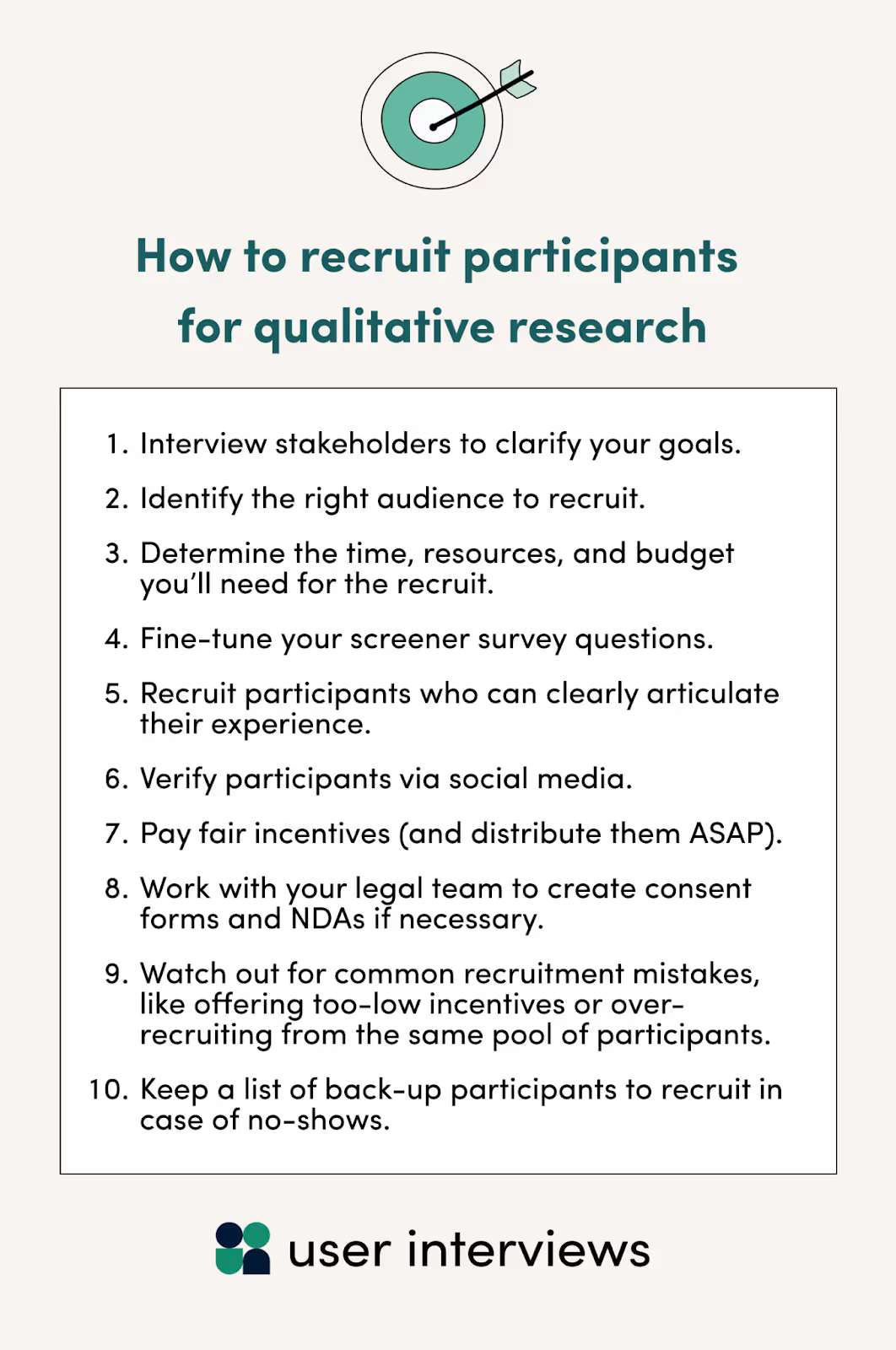

Whichever method you choose, the key steps and best practices you’ll need to follow to ensure a smooth recruitment process include:

- Interview stakeholders to clarify your goals.

- Identify the right audience to recruit.

- Determine the time, resources, and budget you’ll need for the recruit.

- Fine-tune your screener survey questions. (Hot tip: use our screener survey grader tool!)

- Recruit participants who can clearly articulate their experience.

- Verify participants via social media.

- Pay fair incentives (and distribute them ASAP).

- Work with your legal team to create consent forms and NDAs if necessary.

- Watch out for common recruitment mistakes, like offering too-low incentives or over-recruiting from the same pool of participants.

- Keep a list of back-up participants to recruit in case of no-shows.

What are your options? Recruitment methods for qualitative research

There’s no one-size-fits-all approach to recruiting research participants; each method has its own pros and cons. Here’s a brief breakdown of the main options.

- DIY recruitment: If your research budget is extremely tight (or nonexistent) or your sample size is very small (1–2 participants), DIY channels like Craigslist or social media can be a great, low-cost option. However, without the targeting capabilities of the other methods, it’s difficult to reach the right audiences, and DIY just isn’t efficient at scale.

- Recruiting agencies: Agencies might be a good option for researchers looking for highly niche or specialized audiences, but it’ll cost ya. Agency recruiting is one of the most expensive methods, in terms of both time and money. Plus, you won’t have much control over the screening process. Handing screening off can suit researchers with very little time on their hands, but it could result in mismatches.

- In-app or website pop-ups: Some recruiting tools offer intercepts, which recruit participants directly from your app or website. You’ll probably get a high response rate, but you’ll only reach folks who are already using your product or service. Plus, you risk annoying users with the disruption.

- Using a purpose-built tool like User Interviews: Purpose-built research recruiting tools give you more control over the recruiting process, with advanced targeting features and a large pool of vetted participants. Plus, tools like ours can support teams of every size, so you can count on us while you scale. Compare the ROI of User Interviews vs. other recruiting methods here.

Check out our Participant Recruitment Platform Buyer's Guide

How to recruit participants for qualitative research studies

Before you start your recruitment process, you need to have a strong understanding of what you’re trying to discover with your research. You’ll use that information to write screener survey questions, set appropriate incentives, and secure eligible participants.

Here are the steps to follow for an efficient, effective recruitment process:

- Interview stakeholders to clarify your goals.

- Identify the right audience to recruit.

- Determine the time, resources, and budget you’ll need for the recruit.

- Fine-tune your screener survey questions.

- Recruit participants who can clearly articulate their experience.

- Verify participants via social media.

- Pay fair incentives (and distribute them ASAP).

- Work with your legal team to create consent forms and NDAs if necessary.

- Watch out for common recruitment mistakes.

- Keep a list of back-up participants to recruit in case of no-shows.

1. Interview stakeholders to clarify your goals.

Holding a stakeholder interview is critical pre-recruitment work if you’re collaborating with other teams on your project. Sometimes, a research request is well documented and straightforward. Other times, it may not have enough information, or the request may not match what you suspect the real need is.

Ask stakeholders questions like:

- What is this project's objective, in your view?

- What does success look like for this project?

- What concerns do you have about this project?

- What problem are we trying to solve for the user?

- How could improved performance translate to revenue?

For more info on why talking with stakeholders prior to recruiting is critical (and how to approach that discussion), check out these resources:

- Internal Stakeholder Interviews for User Research - From the UX Research Field Guide

- Getting Stakeholder Buy-In for User Research at a Large Organization with Meg Pullis Roebling of BNY Mellon

- How to Get Stakeholder Buy-In Using Linguistic Mirroring

- The UX ROI & Incentive Calculator

2. Identify the right audience to recruit.

Okay, so who do you actually want to talk to? Here are some key questions you need to ask yourself about your target audience.

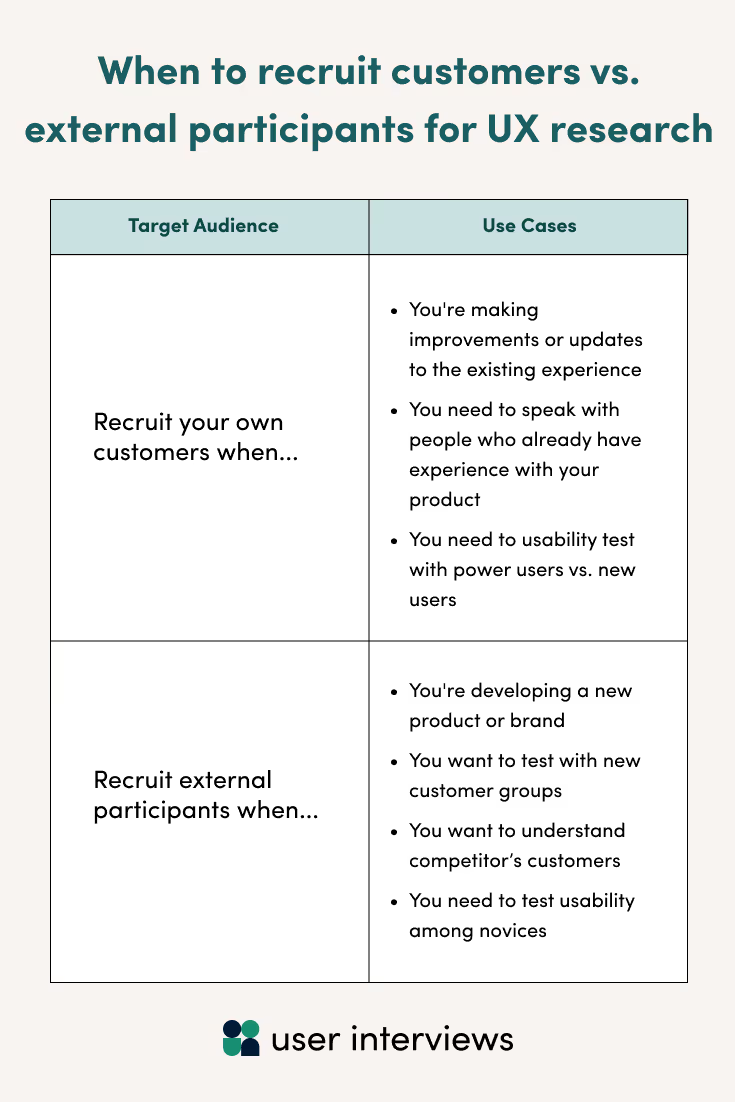

- Do I need to recruit customers or non-customer participants?

- Do I need to recruit professional or consumer audiences?

- What demographic, technical, behavioral, and attitudinal characteristics do I need to look for?

- How do I account for diversity and accessibility to ensure I recruit a representative sample?

- What sample size do I need to achieve statistical significance?

The infographic below can help you decide whether you need to recruit customers or non-customers. If you have multiple goals or use cases, you might need to recruit a mix of both.

As for your sample size, here’s some advice from Kate Moran of Nielsen Norman Group on the Awkward Silences podcast:

“A lot of times we advise people that if you're doing qualitative [user testing], you can get away with anywhere from 5 to 10 participants. Because in a qualitative study, we want to find out what's wrong with this thing, what's not working, and we want to get ideas [to help us] fix it.”
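Why do 5 to 10 participants go so far? One widely cited way to reason about it is the problem-discovery model popularized by Jakob Nielsen and Tom Landauer: if each participant has probability p of running into a given usability problem, n participants are expected to surface a share of 1 - (1 - p)^n of the problems. The sketch below assumes Nielsen’s often-quoted average of p = 0.31; your own value will depend on your product, tasks, and participants, and the model describes usability problem discovery rather than every kind of qualitative study.

```python
def share_of_problems_found(n_participants: int, p_detect: float = 0.31) -> float:
    """Expected share of usability problems surfaced by n participants.

    Uses the problem-discovery model 1 - (1 - p)^n. The default p = 0.31 is an
    assumption (a commonly cited average), not a universal constant.
    """
    if n_participants < 0:
        raise ValueError("n_participants must be non-negative")
    if not 0.0 <= p_detect <= 1.0:
        raise ValueError("p_detect must be between 0 and 1")
    return 1.0 - (1.0 - p_detect) ** n_participants


# Diminishing returns: each extra participant mostly re-finds known problems.
for n in (1, 3, 5, 10):
    print(f"{n:>2} participants -> {share_of_problems_found(n):.0%} of problems")
```

With the default p, five participants surface roughly 84% of problems and ten surface about 98%, which is why the returns from extra sessions drop off quickly.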

3. Determine the time, resources, and budget you’ll need for the recruit.

Different types of recruits can add time, cost, and complexity to your project.

For example, remote studies are usually lower cost than in-person studies, and it can be quicker to recruit remote participants than those who live close enough to come into the lab. Recruiting for specific demographics, physical abilities, or socioeconomic backgrounds can also raise or lower the cost and timeline of your recruit.

Niche participants can take more time to find—but tools like User Interviews can cut recruitment time down substantially. As Leo Smith, Director of User Research at a large insurance company, says on the Awkward Silences podcast, using a recruiting service like User Interviews can lead to massive time savings:

“We were looking at how long on average it was taking us to recruit for a study [on our own]. And it was anywhere between 25 and 40 hours in total across multiple people, across different teams…. generally speaking, [using a recruiting service] was an 80% time savings…. It was so clear, in terms of what this tool was going to cost us versus how many hours it was going to save. It was so blindly obvious that it was going to be a massive return on time invested.”
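To sanity-check Leo’s numbers against your own workload, here’s the arithmetic as a tiny Python helper. The 25 to 40 hours and the 80% savings rate come from the quote; the optional hourly cost is a hypothetical input you’d replace with your own loaded rate for researcher time.

```python
def recruiting_time_savings(baseline_hours: float, savings_rate: float,
                            hourly_cost: float = 0.0) -> dict[str, float]:
    """Hours saved, hours still spent, and (optionally) the dollar value saved."""
    if not 0.0 <= savings_rate <= 1.0:
        raise ValueError("savings_rate must be between 0 and 1")
    saved = baseline_hours * savings_rate
    return {
        "hours_saved": saved,
        "hours_remaining": baseline_hours - saved,
        # hourly_cost is a hypothetical input; 0 means "hours only".
        "value_saved": saved * hourly_cost,
    }


# Figures from the quote: 25-40 hours per study, about 80% time savings.
for baseline in (25, 40):
    result = recruiting_time_savings(baseline, 0.80)
    print(f"{baseline} h -> {result['hours_saved']:.0f} h saved, "
          f"{result['hours_remaining']:.0f} h still spent")
```

At 80%, a 25-hour recruit drops to about 5 hours and a 40-hour recruit to about 8, saving 20 to 32 hours per study.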

💸 Learn more: How to Save Money on User Research Recruiting

4. Fine-tune your screener survey questions.

Effective screener surveys should be brief, neutral, and carefully structured to weed out unqualified or deceptive participants.

Upload or paste your screener to our screener survey grader tool—and voila!—our fancy grading wizard will work its magic, providing feedback on best practices and identifying improvements to help you make it better than ever.

Here are some tips to keep in mind:

- Include your most important criteria first to eliminate or select for potential matches as quickly as possible. Start your survey with non-negotiables, then narrow your focus as you go. If you’re looking for marketing professionals who use InDesign, for example, then experience using InDesign is non-negotiable and you should include that question early on.

- Avoid asking leading questions, such as “How unhappy are you with your current banking system?” Instead, try something neutral like this: “On a scale of 1 to 5, 1 being not satisfied at all and 5 being very satisfied, please rate your satisfaction with your current banking system.”

- Include short response questions to test participants’ ability to engage critically with the questions. For example, if you asked the 1-5 scale question above, the next question could ask the participant to explain why they chose their rating.

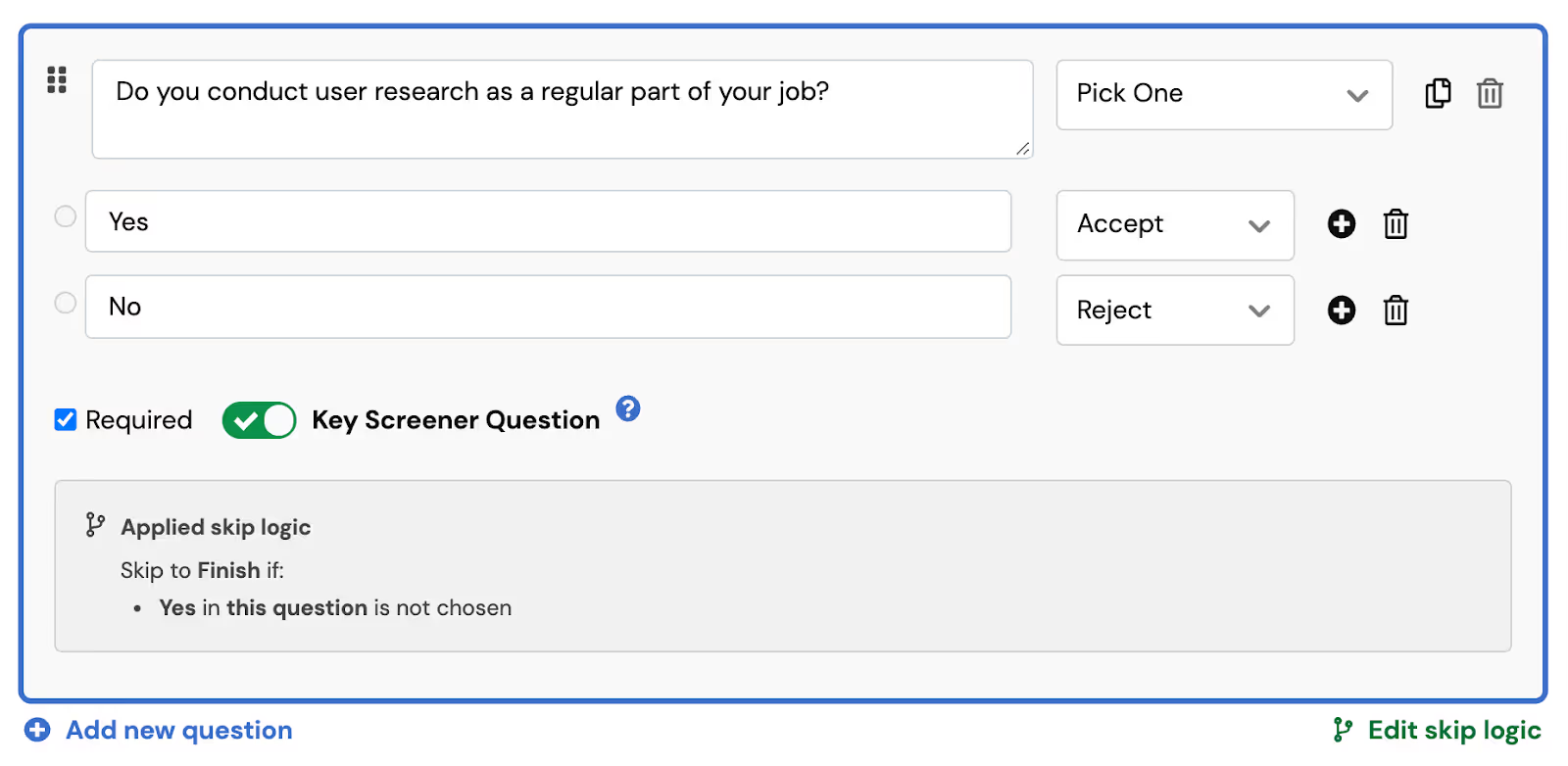

User Interviews’s screener survey tool allows you to program skip logic to automatically disqualify participants and end the screener if they don’t meet your non-negotiable criteria.

For example, the screenshot below shows a required screener question set up with skip logic in the User Interviews platform.
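If you’re building a screener outside a dedicated tool, the same idea can be sketched in a few lines: evaluate questions in order, non-negotiables first, and end the screener at the first disqualifying answer. This is a hypothetical, platform-agnostic example, not the User Interviews API; the question keys and qualifying answers are made up for the InDesign scenario.

```python
from dataclasses import dataclass
from typing import Callable, Optional


@dataclass
class Question:
    key: str
    text: str
    # Returns True if the answer keeps the respondent in the screener.
    qualifies: Callable[[str], bool]


def run_screener(questions: list[Question],
                 answers: dict[str, str]) -> tuple[bool, Optional[str]]:
    """Ask questions in order and stop at the first disqualifying answer (skip logic)."""
    for question in questions:
        answer = answers.get(question.key, "")
        if not question.qualifies(answer):
            return False, question.key  # end the screener early
    return True, None


# Hypothetical screener: the non-negotiable InDesign question comes first.
screener = [
    Question("uses_indesign", "Do you use Adobe InDesign at work?",
             lambda a: a == "yes"),
    Question("role", "Which best describes your role?",
             lambda a: a == "marketing"),
    Question("frequency", "How often do you use InDesign?",
             lambda a: a in {"daily", "weekly"}),
]

print(run_screener(screener, {"uses_indesign": "no"}))
```

Putting the non-negotiable question first means most unqualified respondents exit after a single answer, which keeps the screener short for them and your data clean for you.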

5. Recruit participants who can clearly articulate their experience.

Qualitative researchers are looking for the reasons behind participants’ behavior. If you’re usability testing a website, for example, you want to see your customer’s journey through the website, but you also want to know what motivated their taps and clicks.

That means you need participants who can narrate their choices and explain the logical or emotional processes that lead them down one path and not another. The trouble is, knowing whether or not a participant is articulate is probably one of the hardest parts of successful recruitment.

Use “articulation questions”—questions designed to test participants’ ability to describe what they are thinking and feeling—to find out if a participant can give you the depth of information you want.

Here are three examples you could use in your next survey:

- Describe the last book you read and how you felt about it.

- Share your thought process when you go grocery shopping.

- Tell me about the next vacation you want to take and why.

These questions don’t need to relate to your study. What you’re looking for is whether the participant answers with detail or with the bare minimum.
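If you’re sorting through a lot of screener responses, a rough first pass can flag answers that look too thin to be worth a close read. The sketch below uses word and sentence counts as a crude proxy for detail; the thresholds (25 words, 2 sentences) are hypothetical, and a passing score doesn’t replace actually reading the answer.

```python
import re


def detail_stats(answer: str) -> dict[str, int]:
    """Count words and non-empty sentences in a free-text answer."""
    words = re.findall(r"[A-Za-z0-9']+", answer)
    sentences = [s for s in re.split(r"[.!?]+", answer) if s.strip()]
    return {"words": len(words), "sentences": len(sentences)}


def looks_minimal(answer: str, min_words: int = 25, min_sentences: int = 2) -> bool:
    """Flag bare-minimum answers (hypothetical thresholds) for human review."""
    stats = detail_stats(answer)
    return stats["words"] < min_words or stats["sentences"] < min_sentences


responses = {
    "p1": "It was fine.",
    "p2": ("I read The Overstory last month. It made me notice the trees on my "
           "street and feel a bit guilty about how rarely I think about where "
           "paper comes from, so I started a small garden."),
}
for pid, text in responses.items():
    print(pid, "minimal" if looks_minimal(text) else "detailed")
```

A filter like this is best used to sort your reading order, not to auto-reject: a concise but insightful answer can still fall under the thresholds.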

As Tony Turner of Progressive Insurance says on the Awkward Silences podcast:

“Focus on recruiting early. Have questions in the screener that give them an opportunity to be verbose, or not, [and learn] about them that way. [Learn] about their previous experiences and how long they've been using certain applications.”

👉 Looking for more examples of effective screener questions? Check out this big list of 70+ user testing questions to ask before, during, and after the session (including some questions to avoid).

Consider double-screening to confirm participants’ qualifications

At User Interviews, we offer Double Screening, which is the ability to speak with a participant before the actual research interview.

The double-screening process can help you confirm participants’ identities and decide whether or not they’re able to elaborate on answers enough to be helpful in your study. While this process isn’t necessary for every study, some qualitative research methods demand more articulate users than others.

If you’re trying to put together a focus group for market research, for example, make sure everyone in the room can express themselves well. Or if you have one of your stakeholders (or many stakeholders!) sitting in on the sessions, it’s nice to know for sure that the person you’re talking to is as eloquent with spoken communication as they are in their written screener responses.

6. Verify participants via social media.

Some people volunteer for user testing as a side hustle, since research participation is an easy way to make a little extra money. People who enjoy taking part in studies they’re a good fit for, and who appreciate the spare change, often make great participants.

But problems arise when “professional testers” try to game the system to get into more tests so they can make more money. Some testers use fake emails to set up multiple accounts within a platform or lie about their demographics and behaviors to get chosen for a wider variety of studies.

As a researcher, you can do some leg work to make sure the participants are who they say they are. But on your own, that can be difficult and time consuming.
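One piece of leg work you can do on your own is checking sign-ups for near-duplicate email addresses, since the same person may register several accounts with small variations. The sketch below is a hypothetical heuristic: it lowercases addresses, drops a “+tag” suffix, and removes dots for Gmail addresses (Gmail ignores dots in the username). Not every provider treats “+” or dots this way, so a match is a reason to look closer, not proof of fraud.

```python
from collections import defaultdict

GMAIL_DOMAINS = {"gmail.com", "googlemail.com"}


def normalize_email(address: str) -> str:
    """Collapse common variations of the same mailbox (heuristic, not exhaustive)."""
    local, _, domain = address.strip().lower().partition("@")
    local = local.split("+", 1)[0]  # drop "+tag" sub-addressing
    if domain in GMAIL_DOMAINS:
        local = local.replace(".", "")  # Gmail ignores dots in the username
        domain = "gmail.com"
    return f"{local}@{domain}"


def possible_duplicates(emails: list[str]) -> dict[str, list[str]]:
    """Group sign-up emails that normalize to the same mailbox."""
    groups = defaultdict(list)
    for email in emails:
        groups[normalize_email(email)].append(email)
    return {key: found for key, found in groups.items() if len(found) > 1}


signups = ["jane.doe@gmail.com", "JaneDoe+study2@gmail.com", "sam@example.com"]
print(possible_duplicates(signups))
```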

User Interviews saves you time by running a number of tests to verify participants, including:

- Automated checks at sign-up to look for common fraud patterns

- Verification (and periodic re-verification) via LinkedIn and Facebook

- A machine-learning fraud detection model that flags participants for suspicious activity

- Manual processes to identify and report fraud by our internal team

- Researcher ratings and reviews after meeting with participants

If you use User Interviews to recruit participants, you can view participants’ LinkedIn profiles to verify their identities. Due to privacy regulations, you can’t see participants’ Facebook profiles, but you’ll still be able to see when a participant has been verified via Facebook.

🛡️ Have questions about our fraud measures? Book a time to chat.

7. Pay fair incentives (and distribute them ASAP).

Figuring out exactly what (and how much) to offer as an incentive can be difficult.

As a general rule, incentives should be higher for:

- Longer studies, such as diary studies or those with multiple follow-up sessions

- In-person studies, which require more time and effort from participants

- Professional participants (those who are recruited based on job title or skills)

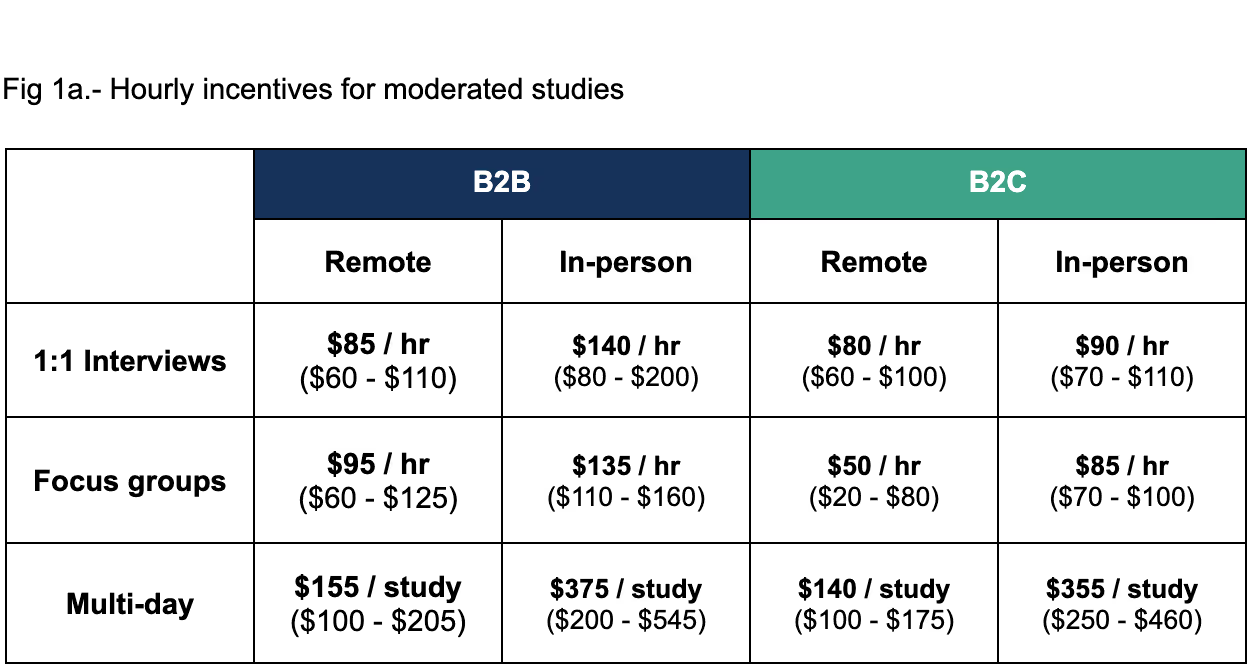

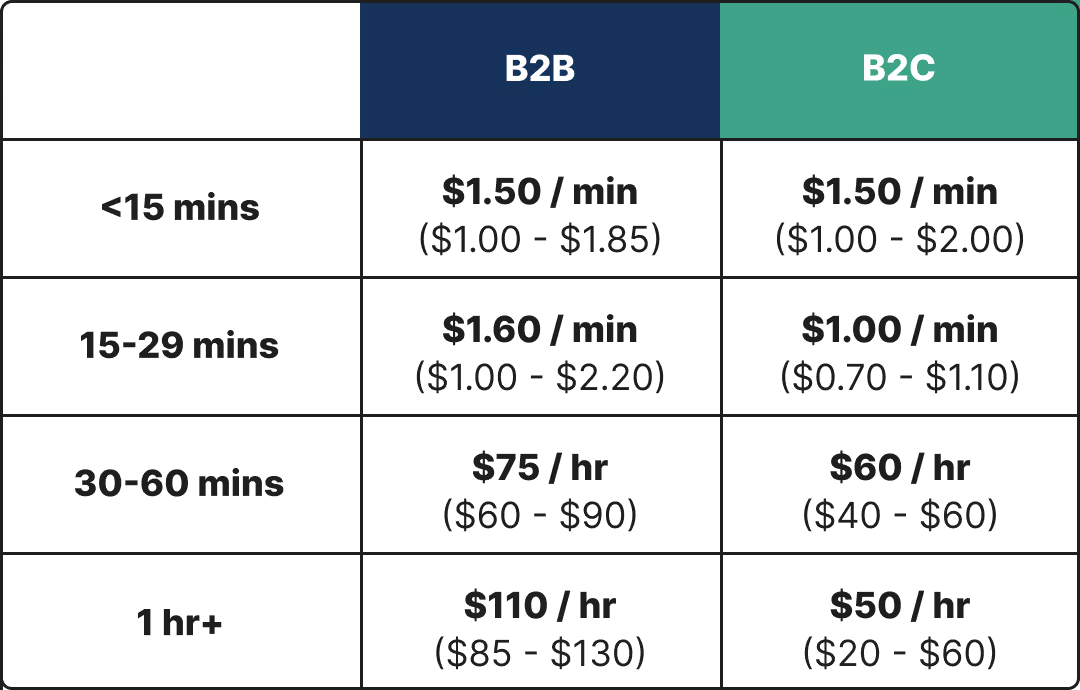

Here are some incentive cheat sheets we put together in our most recent research incentives report.

Optimal incentives for moderated studies

Optimal incentives for unmoderated studies

For a more personalized number, use our User Research Incentive Calculator to input the specific parameters for your next study and get a data-backed incentive recommendation.

It’s important to note, however, that cash-based incentives aren’t always the right way to go. As Teresa Torres, Founder of ProductTalk, says on the Awkward Silences podcast:

“I worked with a company, Snag a Job, [which is] a job board for hourly workers. So they offered $20 for a 20 minute interview. When you went to their home page, an interstitial would pop up that said [something like] "Hey, do you have 20 minutes for us? We'll pay you $20." What's nice about that offer is 20 minutes is a small amount of time. And for an hourly worker who's likely making minimum wage, $20 for 20 minutes is a high value.

Enterprise companies can do this as well. If you're [a company like] Salesforce, and people work in your product all day every day, this type of recruiting will also work. But $20 probably isn't going to cut it.

In fact, I think for enterprise clients, cash is rarely the right reward. You have to look at: what's something valuable that you can offer? And it could be anything from inviting them to an invite-only webinar [to] giving them a discount on their subscription for a month [to] giving them access to a premium helpline."
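Teresa’s Snag a Job example works because of how the offer compares with what participants usually earn. Converting an incentive to an effective hourly rate makes that comparison explicit. In this sketch, the $20 for 20 minutes comes from the quote; the $15/hour typical wage is a hypothetical figure for illustration only.

```python
def effective_hourly_rate(incentive: float, minutes: float) -> float:
    """Convert a flat incentive for a session of `minutes` into an hourly rate."""
    if minutes <= 0:
        raise ValueError("minutes must be positive")
    return incentive * 60 / minutes


def premium_over_wage(incentive: float, minutes: float,
                      typical_hourly_wage: float) -> float:
    """How many times the participant's usual hourly pay the incentive represents."""
    if typical_hourly_wage <= 0:
        raise ValueError("typical_hourly_wage must be positive")
    return effective_hourly_rate(incentive, minutes) / typical_hourly_wage


rate = effective_hourly_rate(20, 20)  # $20 for a 20-minute interview
print(f"Effective rate: ${rate:.2f}/hour")
# $15/hour is a hypothetical typical wage, not a figure from the quote.
print(f"Multiple of a $15/hour wage: {premium_over_wage(20, 20, 15):.1f}x")
```

The same math shows why $20 won’t cut it for enterprise users: as the participant’s own hourly value rises, the multiple shrinks, which is where non-cash rewards like the ones Teresa mentions can carry more weight.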

💰 Keeping track of incentive payouts can be a hassle—and forgetting to pay a high-quality participant makes a terrible impression. If you’re using User Interviews, you can choose to set up automatic incentive distribution for completed sessions. That way, you can focus on analyzing the results while we quickly compensate your participants.

8. Work with your legal team to create consent forms and NDAs if necessary.

The safety, legality, and ethics of your research are essential.

Informed consent is a basic requirement for ethical research involving people, and for some studies, especially clinical studies or those involving minors, signed consent forms can also be legally required. NDAs (or non-disclosure agreements) aren’t always necessary, but your company might require them to keep participants from leaking confidential information.

Ask your legal team how to approach these forms. Do not skip this step, do not pass go, and certainly do not move forward with your research until you’ve collected all the necessary legal documents.

💡 Did you know? You can automate signature collection for key legal documents using User Interviews’s Document Signing Add-On. Simply upload the document when you launch a project, and all participants will be required to sign it before scheduling their session.

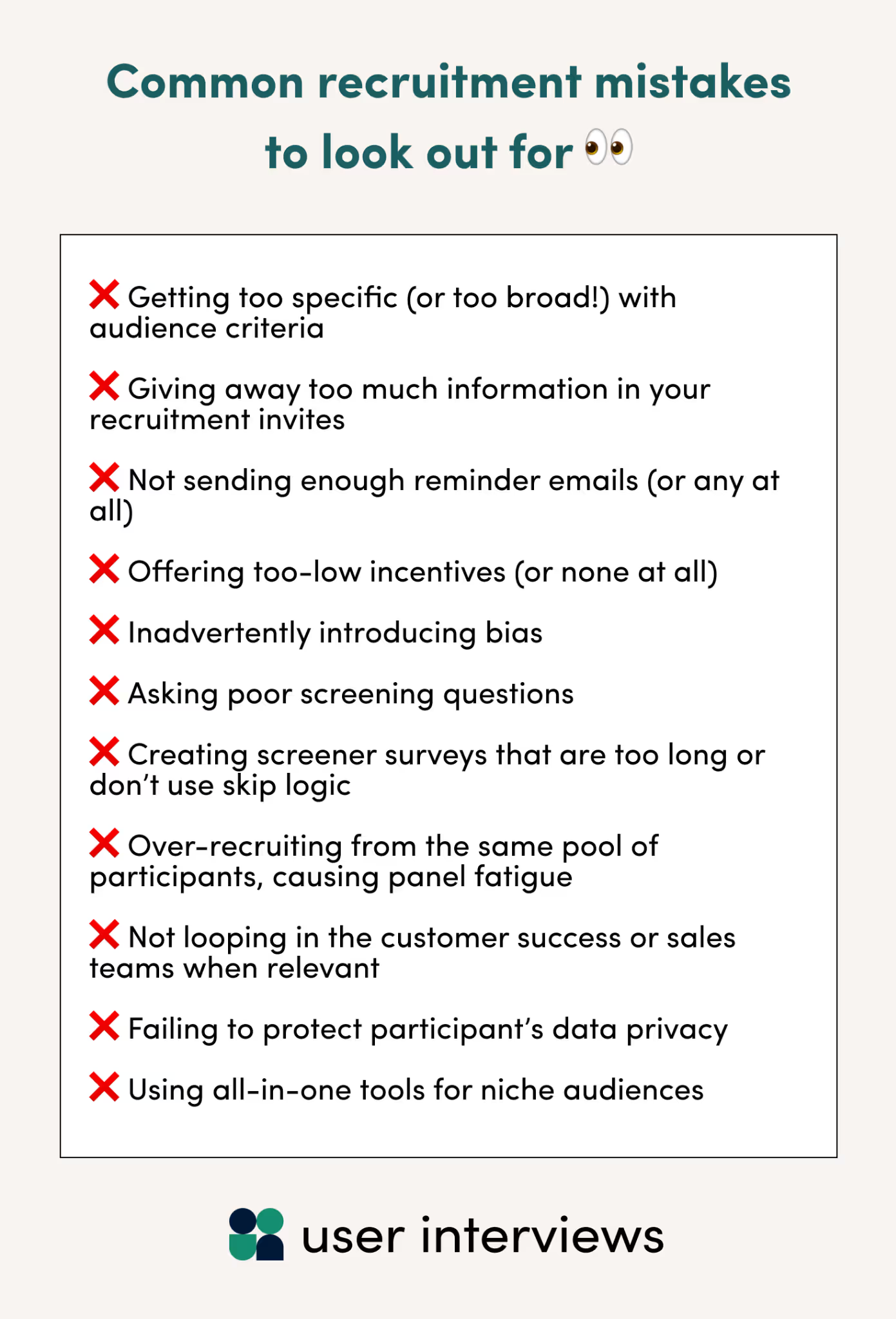

Common recruitment mistakes to watch out for

Recruiting isn’t easy. If you aren’t careful, even seemingly small mistakes can allow poor-fit participants to slip through the cracks, skewing the results of your entire study.

Here are some of the most common recruiting mistakes to keep an eye out for.

- Getting too specific (or too broad!) with audience criteria

- Giving away too much information in your recruitment invites

- Not sending enough reminder emails (or any at all)

- Offering too-low incentives (or none at all)

- Inadvertently introducing bias

- Asking poor screening questions

- Creating screener surveys that are too long or don’t use skip logic

- Over-recruiting from the same pool of participants, causing panel fatigue

- Not looping in the Customer Success or sales teams when relevant

- Failing to protect participants’ data privacy

- Using all-in-one tools for niche audiences
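Over-recruiting from the same pool is easier to avoid if you track when each participant last took part. Here’s a minimal sketch of a cooldown filter, assuming you keep a history of session dates per participant; the 90-day cooldown and four-sessions-per-year cap are hypothetical placeholders you’d set to match your own panel policy.

```python
from datetime import date


def eligible_participants(history: dict[str, list[date]], today: date,
                          cooldown_days: int = 90,
                          max_sessions_per_year: int = 4) -> list[str]:
    """Return participants who are outside the cooldown and under the yearly cap."""
    eligible = []
    for participant_id, sessions in history.items():
        last_session = max(sessions, default=None)
        if last_session is not None and (today - last_session).days < cooldown_days:
            continue  # took part too recently
        past_year = [d for d in sessions if (today - d).days <= 365]
        if len(past_year) >= max_sessions_per_year:
            continue  # already heavily researched this year
        eligible.append(participant_id)
    return eligible


history = {
    "p1": [date(2024, 5, 1)],  # 31 days ago: still cooling down
    "p2": [date(2023, 1, 1)],  # long enough ago
    "p3": [],                  # never participated
}
print(eligible_participants(history, today=date(2024, 6, 1)))
```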

💝 Psst—if you’re new to research recruiting or anxious about messing it up, User Interviews pairs every customer with a Project Coordinator who helps you manage your projects from start to finish. Learn more about our support teams.

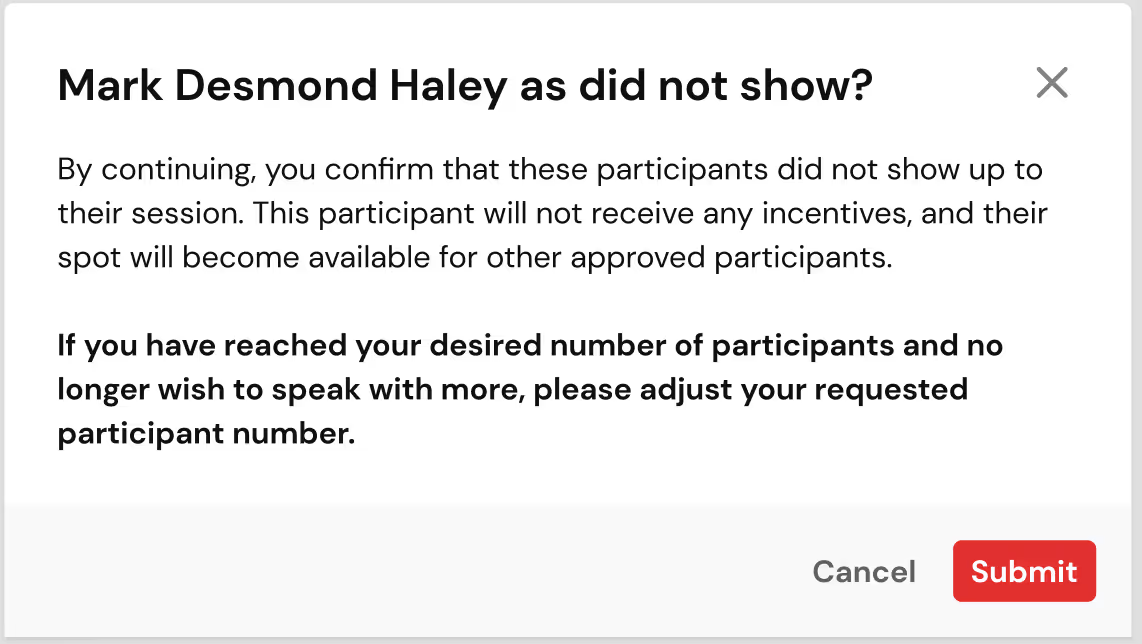

Worried about no-shows? Keep a list of back-up participants to recruit from just in case

No-shows happen, sometimes through no fault of your own or the participant’s.

But no matter the reason, a no-show hinders your ability to complete your research. That’s why we recommend keeping a list of backup participants you can contact to fill any open spots.

You can do this directly in the User Interviews platform by rating screener respondents as “poor fit,” “potential fit” or “best fit.” Schedule your study with your “best fit” participants, and keep a list of “potential fits” as backups.

If the worst does happen and a participant doesn’t show up for your study, mark them as a no-show. We’ll never make you pay for sessions that didn’t work out, and if you’ve already approved extra participants, they’ll be automatically scheduled to make up for the cancellation. With this process, your study is more likely to start on time.
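If you manage recruits in a spreadsheet or your own tooling rather than a platform, the best fit / potential fit workflow can be sketched as a simple queue. This is a hypothetical illustration of the process, not how User Interviews implements it: best fits fill the available slots, any leftover best fits plus all potential fits form an ordered backup list, and the next backup is promoted when someone doesn’t show.

```python
from collections import deque
from dataclasses import dataclass, field
from typing import Optional


@dataclass
class StudyRoster:
    scheduled: list[str] = field(default_factory=list)
    backups: deque = field(default_factory=deque)
    no_shows: list[str] = field(default_factory=list)


def build_roster(ratings: dict[str, str], slots: int) -> StudyRoster:
    """Schedule best fits first; queue remaining best fits, then potential fits, as backups."""
    best = [p for p, rating in ratings.items() if rating == "best fit"]
    potential = [p for p, rating in ratings.items() if rating == "potential fit"]
    queue = deque(best + potential)  # poor fits are never scheduled
    roster = StudyRoster()
    while queue and len(roster.scheduled) < slots:
        roster.scheduled.append(queue.popleft())
    roster.backups = queue
    return roster


def mark_no_show(roster: StudyRoster, participant: str) -> Optional[str]:
    """Record a no-show and promote the next backup, if there is one."""
    roster.scheduled.remove(participant)  # raises ValueError if not scheduled
    roster.no_shows.append(participant)
    if roster.backups:
        replacement = roster.backups.popleft()
        roster.scheduled.append(replacement)
        return replacement
    return None


ratings = {"ana": "best fit", "ben": "potential fit", "cy": "best fit",
           "dee": "poor fit", "eve": "potential fit"}
roster = build_roster(ratings, slots=2)
print(roster.scheduled, list(roster.backups))
print("Replacement:", mark_no_show(roster, "cy"))
```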

📚 Further reading: 16 Ways to Reduce No-Shows in UX Research

Recruit your first qualified participant in hours with User Interviews

User Interviews is the fastest, easiest way to recruit research participants. In fact, our median time to your first matched participant is only one hour.

Why? Recruit’s growing panel of over 4 million verified participants gives you access to the audiences you need, no matter how niche. You can launch a project in minutes, get matched with your first qualified participants within hours, and complete your research within days.

We provide:

- Advanced targeting capabilities and an ML-powered matching algorithm to help you find the audiences you need, whether you’re searching for a consumer audience, a professional niche, or segmenting your own customers for outreach

- Automated recruitment workflows that eliminate busywork like scheduling sessions and distributing incentives, so you get results faster

- Guardrails, admin controls, and endless customizations to help you stay coordinated as you scale

- Secure, enterprise-grade software that’s SOC2 certified, includes privacy controls, and supports SSO and 2FA

⭐ Best of all, you can get started for free right out of the box, and we’re already integrated with all of your favorite testing tools.
